Headroom: Revolutionizing LLM Efficiency with 60-95% Token Consumption Reduction

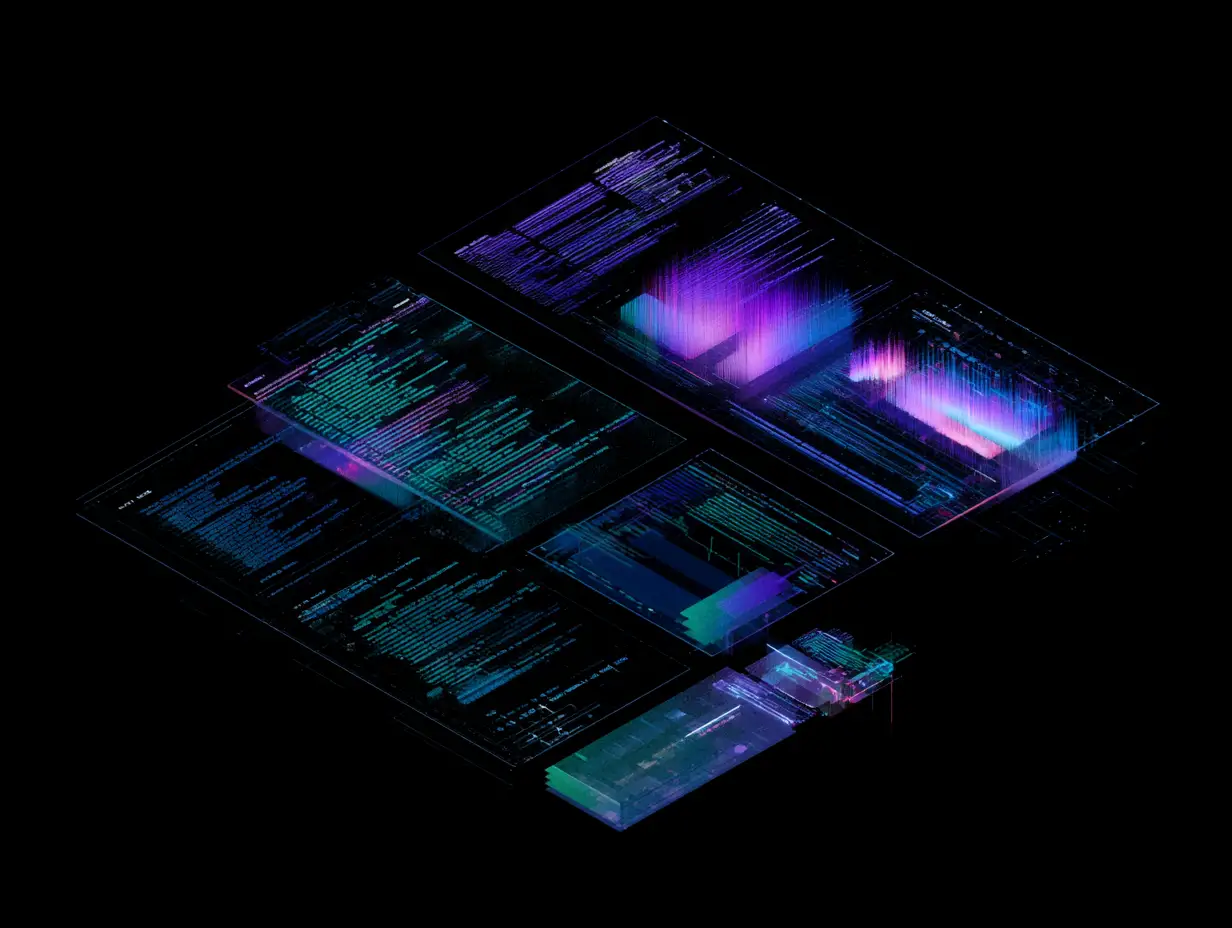

Headroom is a new open-source utility that adds a compression layer in front of Large Language Models (LLMs). It compresses data before it reaches the model, specifically tool outputs, logs, files, and RAG (Retrieval-Augmented Generation) chunks. According to the project, this cuts token consumption by 60% to 95%. The project also states that the compression preserves result quality, so the model's performance stays consistent even though its input is much smaller. Headroom can be integrated as a library, a proxy, or a Model Context Protocol (MCP) server. This flexibility makes it an option for developers who want to lower costs and work within context-window limits in modern AI applications.
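The article does not describe Headroom's internal compression strategies or its API. The sketch below is therefore only a generic illustration of the underlying idea: shrink a tool output, in this case a noisy log, before it is sent to the model, then measure the token savings. It assumes a deliberately simple technique that collapses runs of identical consecutive lines. It also uses a crude whitespace word count in place of a real tokenizer. `compress_log`, `count_tokens`, and `CompressionResult` are hypothetical names and are not part of Headroom.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass
class CompressionResult:
    text: str
    tokens_before: int
    tokens_after: int

    @property
    def reduction(self) -> float:
        # Fraction of tokens removed (0.0 = nothing saved, 0.95 = 95% saved).
        if self.tokens_before == 0:
            return 0.0
        return 1 - self.tokens_after / self.tokens_before


def count_tokens(text: str) -> int:
    # Hypothetical stand-in for a real tokenizer: counts whitespace-separated words.
    return len(text.split())


def compress_log(text: str) -> CompressionResult:
    """Collapse runs of identical consecutive lines into one line plus a repeat count.

    This is an illustrative technique only, not Headroom's actual algorithm.
    """
    out = []
    # groupby groups *consecutive* equal lines, so non-adjacent repeats are kept.
    for line, group in groupby(text.splitlines()):
        n = sum(1 for _ in group)
        out.append(line if n == 1 else f"{line} [repeated {n}x]")
    compressed = "\n".join(out)
    return CompressionResult(compressed, count_tokens(text), count_tokens(compressed))


if __name__ == "__main__":
    # A log dominated by a repetitive heartbeat message with one important error.
    log = "INFO heartbeat ok\n" * 50 + "ERROR disk full on /dev/sda1\n"
    result = compress_log(log)
    print(result.text)
    print(f"{result.tokens_before} -> {result.tokens_after} tokens ({result.reduction:.0%} saved)")
    # prints "155 -> 10 tokens (94% saved)" on the last line
```

In this sketch the error line survives intact while the repetitive noise collapses to a single summarized line. This is the kind of trade-off a pre-model compression layer aims for. Real tools typically combine several content-aware strategies, so the savings on any given input will differ from this toy example.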
