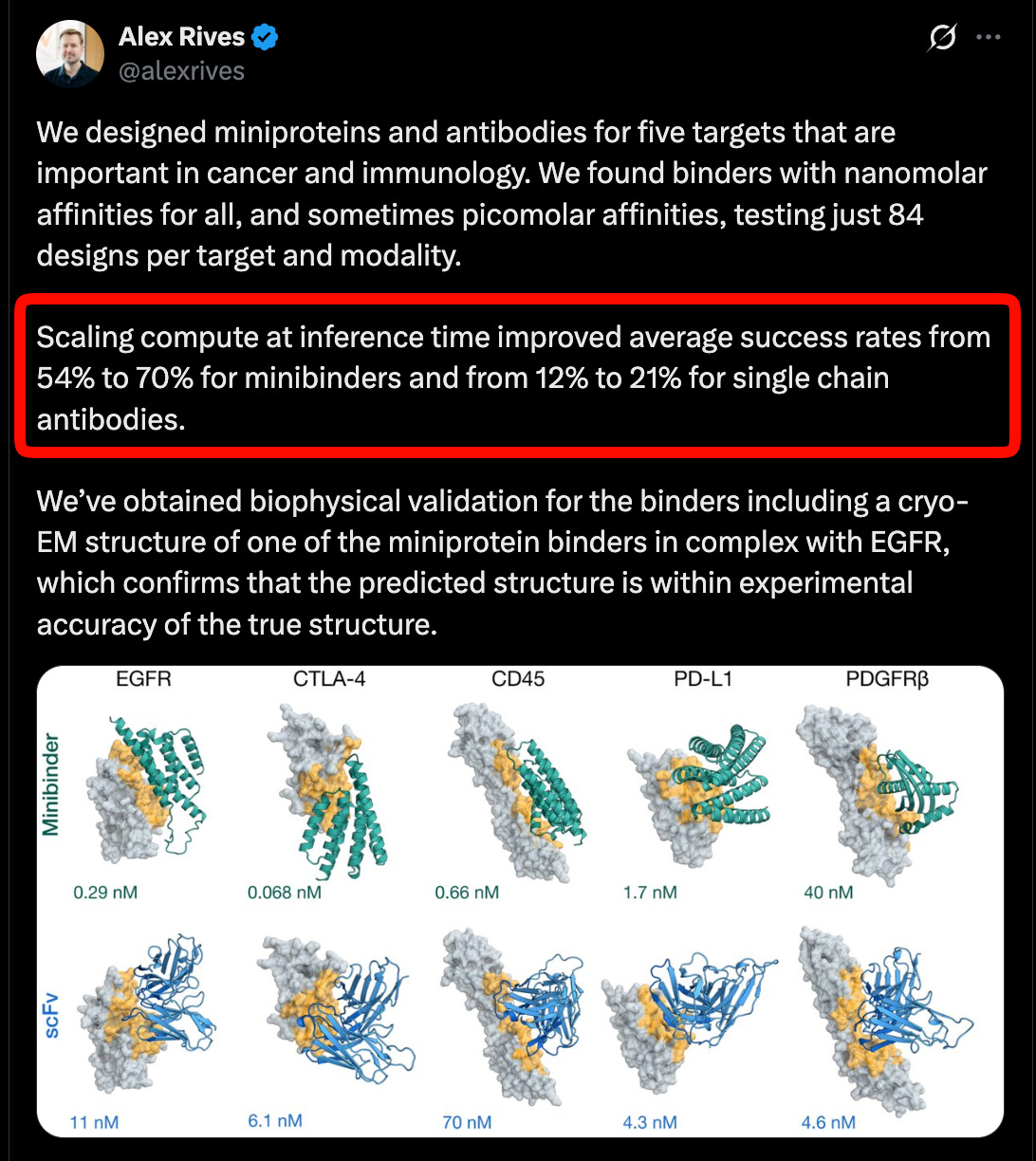

Hugging Face Blog Publishes EMO: Pretraining Mixture of Experts for Emergent Modularity

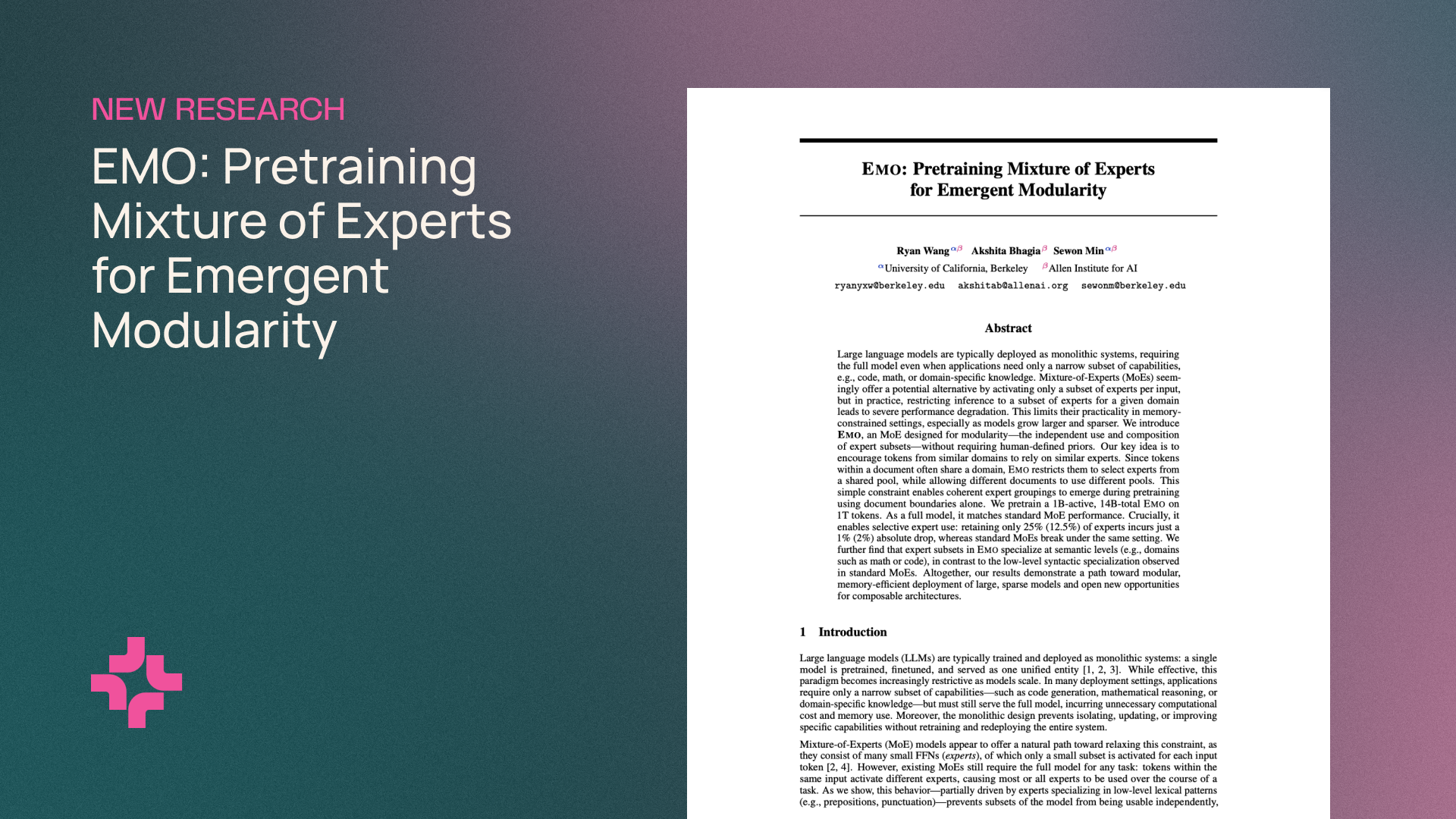

The Hugging Face Blog has published a new research entry titled 'EMO: Pretraining mixture of experts for emergent modularity.' The post, dated May 8, 2026, examines how modular structure can emerge during the pretraining of Mixture of Experts (MoE) models. The technical details and experimental results are contained in the full blog post. Judging from the title, the central question is whether the experts in an MoE model can come to specialize in distinct functions on their own, without those roles being assigned by hand. The work adds to ongoing research on making large-scale models more efficient and more structurally organized from the start of training.

Key Takeaways

- Introduction of 'EMO,' a research project focused on pretraining Mixture of Experts (MoE) models.

- The stated goal is 'emergent modularity': functional specialization that arises inside the network during training rather than being imposed by design.

- The research was officially documented and shared via the Hugging Face Blog on May 8, 2026.

In-Depth Analysis

Understanding EMO and Mixture of Experts

As the title indicates, EMO centers on Mixture of Experts (MoE) architectures. An MoE layer contains several parallel sub-networks, called 'experts,' along with a router (or gating network) that sends each input token to a small subset of them. Because only the selected experts run for a given token, the model can hold many parameters while spending compute on only a fraction of them per token, and individual experts can come to specialize in different aspects of the data. EMO targets the pretraining phase, the initial stage in which a model learns general patterns from a large dataset. By intervening at this stage, EMO presumably aims to shape how the experts organize and specialize, with the goal of improving overall model quality and efficiency. The specifics of the method are described in the full post.
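To make the routing idea concrete, here is a minimal, pure-Python sketch of a generic top-k MoE layer applied to a single token. It is not taken from the EMO post and does not represent EMO's method. The function names (`top_k_route`, `moe_forward`), the toy router weights, and the toy experts are all hypothetical, chosen only for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and renormalize their gate weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    gates = softmax([router_logits[i] for i in ranked])
    return list(zip(ranked, gates))


def moe_forward(
    x: Vector,
    router: Callable[[Vector], List[float]],
    experts: Sequence[Callable[[Vector], Vector]],
    k: int = 2,
) -> Vector:
    """Run only the routed experts and return the gate-weighted sum of their outputs."""
    out = [0.0] * len(x)
    for idx, gate in top_k_route(router(x), k):
        y = experts[idx](x)  # unselected experts are never evaluated
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out


# Hypothetical toy setup: a linear router over 4 experts; each expert just scales its input.
ROUTER_WEIGHTS = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [-1.0, 0.0]]


def router(x: Vector) -> List[float]:
    """One score per expert: dot product of x with that expert's router row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in ROUTER_WEIGHTS]


experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]

print(top_k_route(router([2.0, 1.0]), k=2))
print(moe_forward([2.0, 1.0], router, experts, k=2))
```

With input `[2.0, 1.0]`, the toy router scores experts 0 and 2 highest (2.0 and 1.5), so only those two experts run, and their outputs are blended with gate weights of roughly 0.62 and 0.38. Experts 1 and 3 are skipped entirely, which is where the per-token compute savings come from. Production implementations operate on batched tensors and include extra machinery such as load-balancing terms; this sketch omits all of that.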

The Role of Emergent Modularity

A central concept in this research is 'emergent modularity.' In conventional architectures, modularity usually comes from explicit design: engineers decide in advance which component handles which task. 'Emergent' modularity instead means that the pretraining process leads the model to organize itself into functional modules, such as experts that consistently handle particular kinds of input, without that division of labor being specified for each sub-task. If modules of this kind form during pretraining, the resulting models could be easier to adapt and fine-tune, because specialized components would already exist before any task-specific training begins.
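One simple way to look for emergent modularity in a trained MoE model is to log which expert each token is routed to and check whether tokens from different domains concentrate on different experts. The sketch below is a hypothetical illustration, not the EMO authors' evaluation: the metric (1 minus the normalized entropy of a domain's expert-usage distribution), the function names `expert_usage_by_domain` and `specialization`, and the routing log are all assumptions.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def expert_usage_by_domain(assignments: Iterable[Tuple[str, int]]) -> Dict[str, Counter]:
    """Count how often each expert was chosen, separately for each domain label."""
    usage: Dict[str, Counter] = defaultdict(Counter)
    for domain, expert in assignments:
        usage[domain][expert] += 1
    return dict(usage)


def specialization(counts: Counter, num_experts: int) -> float:
    """Hypothetical score: 1 - normalized entropy of expert usage.

    0.0 means tokens are spread evenly over all experts;
    1.0 means every token went to a single expert.
    """
    if num_experts < 2:
        raise ValueError("need at least two experts")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no routing decisions recorded")
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values() if c > 0)
    # Clamp to guard against tiny negative values from floating-point rounding.
    return max(0.0, 1.0 - entropy / math.log(num_experts))


# Made-up routing log of (domain, chosen expert) pairs, for illustration only.
log = [("code", 0)] * 9 + [("code", 1)] + [("prose", e) for e in (0, 1, 2, 3)] * 3

usage = expert_usage_by_domain(log)
for domain, counts in usage.items():
    print(domain, round(specialization(counts, num_experts=4), 3))
```

In the made-up log, 'code' tokens go almost entirely to expert 0 and score about 0.77, while 'prose' tokens are spread evenly over all four experts and score 0, which is what an absence of specialization looks like under this metric.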

Industry Impact

Publication on the Hugging Face Blog puts EMO in front of a large share of the AI research and open-source community. As models grow, MoE designs are attractive because they increase total parameter count without a proportional increase in the compute spent per token. Understanding how modularity emerges in these models could help manage their computational cost and structural complexity. If EMO's pretraining approach proves effective, it could inform how future large-scale MoE systems are built so that they remain both efficient and functionally well organized.

Frequently Asked Questions

Question: What is the main focus of the EMO research?

EMO focuses on the pretraining of Mixture of Experts (MoE) models with a specific emphasis on fostering emergent modularity within the model's structure.

Question: Who published the EMO research findings?

The findings were published on the Hugging Face Blog, a central hub for AI research and open-source machine learning developments.

Question: When was this information released?

The research was published on May 8, 2026.