Hugging Face Unveils Strategic Building Blocks for Foundation Model Training and Inference on AWS Infrastructure

On May 11, 2026, Hugging Face announced a new initiative titled 'Building Blocks for Foundation Model Training and Inference on AWS.' The initiative provides a structured framework for developers and enterprises to manage the lifecycle of large-scale AI models within the Amazon Web Services (AWS) ecosystem. By covering both the training and inference phases, the announcement takes a comprehensive approach to cloud-based AI development. While the initial report concentrates on the foundational components, it signals a significant step in the ongoing collaboration between Hugging Face and AWS to simplify the deployment of foundation models for a broader range of users.

Key Takeaways

- Strategic Framework: Hugging Face has introduced a set of 'building blocks' specifically designed for the AWS environment to handle foundation models.

- End-to-End Support: The initiative covers the two most critical phases of the AI lifecycle: large-scale training and efficient inference.

- Cloud Integration: The focus is on optimizing these processes within Amazon Web Services (AWS), suggesting a deep integration with cloud-native infrastructure.

- Accessibility: The initiative aims to give developers the components they need to streamline the development and deployment of foundation models.

In-Depth Analysis

The Concept of Building Blocks in AI Infrastructure

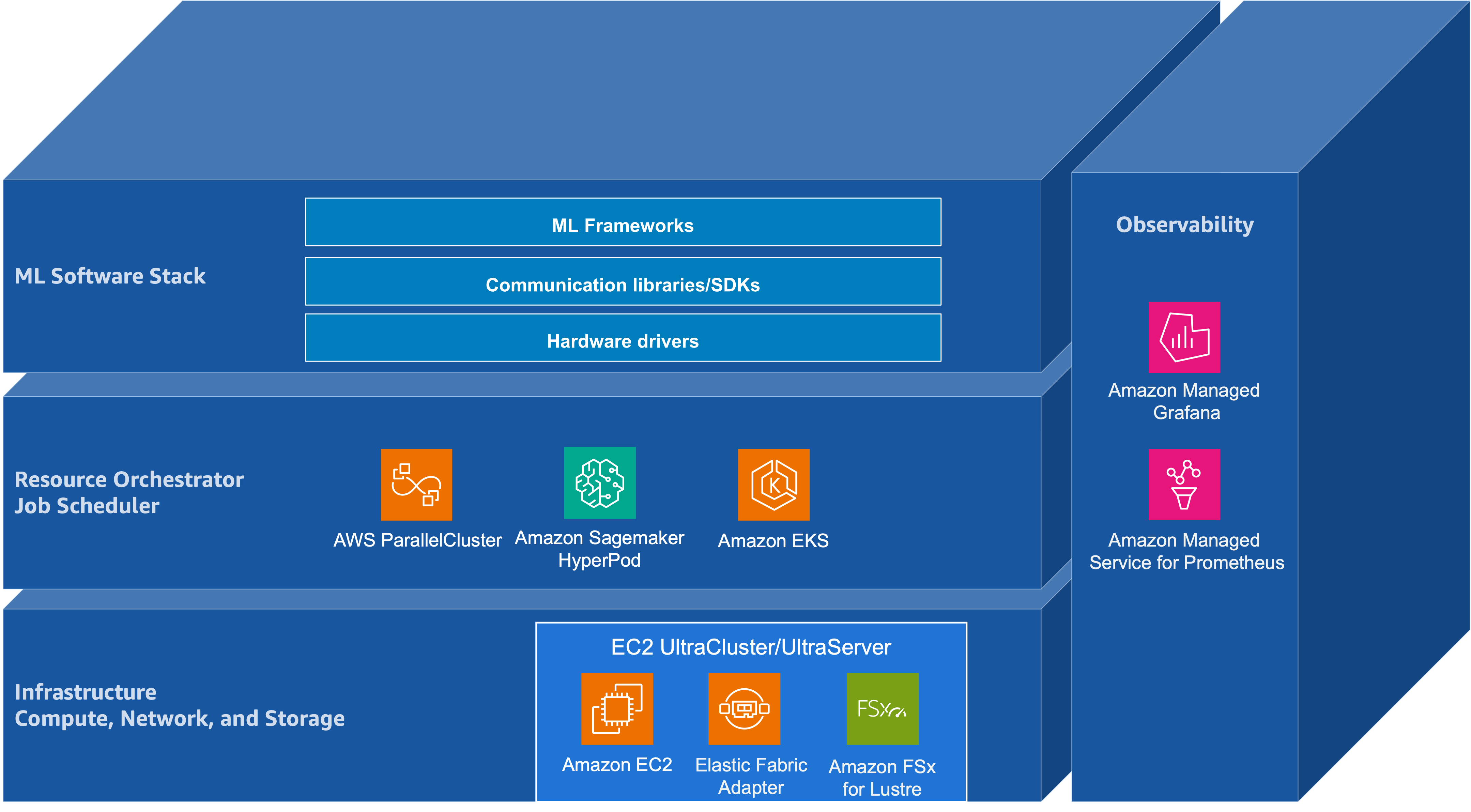

The announcement of 'Building Blocks for Foundation Model Training and Inference on AWS' represents a shift toward modularity in the development of artificial intelligence. In the context of foundation models—which are characterized by their massive scale and multi-purpose utility—the term 'building blocks' refers to the essential architectural components required to manage data, compute, and deployment. By formalizing these blocks on AWS, Hugging Face is addressing the inherent complexity that often acts as a barrier to entry for organizations looking to leverage large-scale AI.

These building blocks likely encompass the necessary configurations and workflows required to synchronize Hugging Face's extensive library of models with the high-performance computing capabilities of AWS. The focus on both training and inference suggests that the initiative is not merely about creating models, but about ensuring they can be sustained and served efficiently in a production environment. This modular approach allows developers to pick and choose the components that fit their specific use cases, whether they are fine-tuning an existing model or deploying a pre-trained one at scale.

Optimizing Training and Inference on AWS

The dual focus on training and inference is critical for the current state of the AI industry. Training foundation models requires immense computational resources and sophisticated orchestration to manage distributed workloads across multiple GPU or accelerator nodes. By providing building blocks for this phase, the collaboration aims to reduce the technical overhead associated with setting up these environments on AWS. That overhead includes managing data pipelines, checkpointing, and hardware optimization.
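The announcement does not describe how checkpointing is implemented, so the following is only a minimal sketch of the general idea, using the Python standard library. It shows a toy training loop that saves its progress periodically and resumes from the last saved step after a failure. The function names (`save_checkpoint`, `load_checkpoint`, `train`), the JSON file format, and the simulated failure are all illustrative assumptions, not part of any Hugging Face or AWS API.

```python
import json
import os
import tempfile


def save_checkpoint(path, step, state):
    """Write the checkpoint to a temp file, then rename it into place.

    The rename means a crash mid-write cannot leave a half-written file
    at `path`. (Hypothetical helper, for illustration only.)
    """
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"step": step, "state": state}, f)
    os.replace(tmp_path, path)


def load_checkpoint(path):
    """Return (step, state), or a fresh start if no checkpoint exists."""
    if not os.path.exists(path):
        return 0, {"loss": None}
    with open(path) as f:
        data = json.load(f)
    return data["step"], data["state"]


def train(path, total_steps, checkpoint_every, fail_at=None):
    """Toy loop: resume from the last checkpoint and save periodically."""
    step, state = load_checkpoint(path)
    while step < total_steps:
        step += 1
        state["loss"] = 1.0 / step  # stand-in for a real optimizer step
        if fail_at is not None and step == fail_at:
            raise RuntimeError("simulated node failure")
        if step % checkpoint_every == 0 or step == total_steps:
            save_checkpoint(path, step, state)
    return step, state


if __name__ == "__main__":
    ckpt = os.path.join(tempfile.mkdtemp(), "ckpt.json")
    try:
        train(ckpt, total_steps=10, checkpoint_every=4, fail_at=7)
    except RuntimeError:
        pass  # the run died at step 7; the last saved step is 4
    print(load_checkpoint(ckpt)[0])
    print(train(ckpt, total_steps=10, checkpoint_every=4)[0])
```

The trade-off is in `checkpoint_every`: saving more often loses less work after a failure but spends more time writing state, which matters when the state is a multi-gigabyte model rather than a small dictionary.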

On the other side of the lifecycle, inference (the process of using a trained model to make predictions) presents its own set of challenges, particularly regarding latency and cost. The building blocks for inference are designed to help users deploy models in a way that is both responsive and cost-effective. Within the AWS ecosystem, this typically involves leveraging specialized instances and scaling services to handle varying levels of demand. The integration provided by Hugging Face is intended to make the transition from a trained model to a live inference endpoint as seamless as possible.
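To make the latency and cost trade-off concrete, here is a rough back-of-the-envelope sketch of how one might size an inference deployment. It is not taken from the announcement: the function names, the 70% target utilization, the per-replica throughput, and the hourly price are all hypothetical values chosen for illustration.

```python
import math


def replicas_needed(requests_per_second, per_replica_rps, target_utilization=0.7):
    """Replicas required to serve the load while keeping some headroom.

    Running each replica below full capacity (here 70%, an assumed value)
    leaves room for traffic spikes and helps keep latency stable.
    """
    if per_replica_rps <= 0:
        raise ValueError("per_replica_rps must be positive")
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    usable_rps = per_replica_rps * target_utilization
    return max(1, math.ceil(requests_per_second / usable_rps))


def cost_per_thousand_requests(requests_per_second, replicas, hourly_price_per_replica):
    """Serving cost per 1,000 requests at a steady request rate."""
    hourly_cost = replicas * hourly_price_per_replica
    requests_per_hour = requests_per_second * 3600
    return hourly_cost / requests_per_hour * 1000


if __name__ == "__main__":
    # Hypothetical workload: 100 req/s, each replica sustains 20 req/s.
    n = replicas_needed(100, 20)
    print(n)
    # Hypothetical price of 1.50 per replica-hour.
    print(round(cost_per_thousand_requests(100, n, 1.50), 4))
```

Under these assumed numbers, lowering `target_utilization` buys latency headroom at the price of more replicas, which is the responsiveness-versus-cost tension the paragraph above describes.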

Industry Impact

The introduction of these building blocks has significant implications for the AI industry, particularly for enterprises that rely on AWS for their cloud infrastructure. First, it reinforces the position of AWS as a primary destination for foundation model development, backed by the community-driven expertise of Hugging Face. This partnership simplifies the 'stack' for AI developers, potentially accelerating the time-to-market for new AI-driven applications.

Furthermore, by standardizing the components needed for training and inference, Hugging Face is helping to democratize access to high-end AI capabilities. Smaller organizations that may not have the specialized engineering talent to build these systems from scratch can now utilize these building blocks to compete with larger tech entities. This move is likely to spur innovation across various sectors as more companies gain the ability to customize and deploy foundation models tailored to their specific needs.

Frequently Asked Questions

Question: What are the 'building blocks' mentioned in the Hugging Face announcement?

Based on the announcement, the building blocks refer to the foundational components and structured workflows required to perform foundation model training and inference specifically on the AWS cloud platform. They are designed to simplify the technical complexities of the AI lifecycle.

Question: Why is the focus on both training and inference important?

Training and inference represent the two major stages of AI development. Training is the resource-intensive process of teaching a model, while inference is the process of using it in real-world applications. Providing building blocks for both ensures that developers have a complete path from initial development to final deployment.

Question: Who is the primary audience for these AWS building blocks?

The primary audience includes AI developers, data scientists, and enterprise engineering teams who use Amazon Web Services and want to leverage Hugging Face's tools to build, optimize, and deploy large-scale foundation models efficiently.