Claude Code Review

Claude Code Review: Agent-Based Deep Code Analysis for Engineering Teams

Claude Code Review is a new multi-agent system designed to automate deep architectural and bug-catching reviews on Pull Requests. Built for depth rather than speed, it dispatches teams of AI agents to find bugs, verify them to filter out false positives, and rank them by severity. Currently in research preview for Team and Enterprise plans, the tool aims to ease the 'review bottleneck' by providing high-signal feedback on issues that human reviewers might miss, with the goal of improving code quality and security across large-scale development projects.

2026-03-13

--K

Claude Code Review Product Information

Claude Code Review: Transforming Pull Requests with Multi-Agent Intelligence

Code output is accelerating rapidly. At Anthropic, code output per engineer grew by 200% in a single year. That growth has turned code review into a significant bottleneck: many Pull Requests (PRs) receive only surface-level skims rather than the deep, critical analysis needed to maintain system integrity.

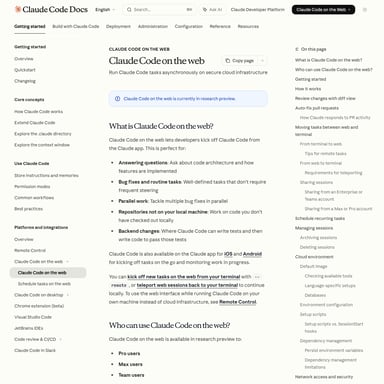

To address this, we are introducing Claude Code Review, a thorough review system built on teams of agents and currently available in research preview. Modeled on the review process Anthropic uses internally, it is designed for depth, with the aim of catching critical bugs that human reviewers often overlook.

What Is Claude Code Review?

Claude Code Review is a multi-agent system integrated into the Claude Code ecosystem. Unlike standard linters or basic GitHub Actions workflows, it dispatches a team of AI agents to every PR. The agents work in parallel to read code changes in depth, verify candidate bugs to filter out false positives, and rank findings by severity.

While the existing open-source Claude Code GitHub Action provides a lightweight solution, Claude Code Review is a more thorough, high-signal alternative built for organizations that prioritize security and architectural depth. It is currently available in research preview for Claude Team and Enterprise plans.

Features of Claude Code Review

Multi-Agent Analysis

When a PR is opened, Claude Code Review dispatches multiple agents. This team-based approach allows the system to:

- Look for bugs in parallel: Different agents can focus on different aspects of the codebase.

- Verify findings: Agents cross-check potential issues to filter out false positives.

- Rank by severity: Findings are ranked so developers can address the most critical issues first.
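The find, verify, and rank stages listed above can be sketched in Python. This is a minimal illustration of the general pattern under stated assumptions, not Anthropic's implementation: the `Severity` and `Finding` types, the `review` function, and the toy `auth_finder`, `style_finder`, and `drop_nits` functions are all hypothetical, and plain Python callables stand in for AI agents.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable


class Severity(IntEnum):
    # Hypothetical severity scale; a higher value means more urgent.
    NIT = 0
    MINOR = 1
    MAJOR = 2
    CRITICAL = 3


@dataclass(frozen=True)
class Finding:
    # Hypothetical shape of a single reported issue (frozen, so it is hashable).
    file: str
    line: int
    message: str
    severity: Severity


# Hypothetical "finder agent": reads the diff and reports candidate findings.
Finder = Callable[[str], list[Finding]]
# Hypothetical "verifier agent": re-checks one finding against the diff.
Verifier = Callable[[str, Finding], bool]


def review(diff: str, finders: list[Finder], verifier: Verifier) -> list[Finding]:
    # 1. Fan out: every finder scans the same diff concurrently.
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda find: find(diff), finders))
    # Merge results; the set drops identical findings reported by several finders.
    candidates = {f for batch in batches for f in batch}
    # 2. Verify: keep only findings the verifier confirms, filtering false positives.
    confirmed = [f for f in candidates if verifier(diff, f)]
    # 3. Rank: most severe first, then by file and line for a stable order.
    return sorted(confirmed, key=lambda f: (-f.severity, f.file, f.line))


# Toy stand-ins for agents, for demonstration only.
def auth_finder(diff: str) -> list[Finding]:
    return [Finding("auth.py", 12, "token may be None here", Severity.CRITICAL)]


def style_finder(diff: str) -> list[Finding]:
    return [
        Finding("util.py", 3, "unused import", Severity.NIT),
        Finding("auth.py", 12, "token may be None here", Severity.CRITICAL),
    ]


def drop_nits(diff: str, finding: Finding) -> bool:
    # Toy verifier: rejects anything below MINOR as noise.
    return finding.severity > Severity.NIT


if __name__ == "__main__":
    for f in review("<diff text>", [auth_finder, style_finder], drop_nits):
        print(f.severity.name, f.file, f.line, f.message)
```

Deduplicating before verification means a bug that several finders report is checked, and would be commented on, only once; the separate verification pass corresponds to the "Verify findings" step above.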

High-Signal Reporting

Rather than cluttering a PR with noise, the system provides:

- A single, high-signal overview comment summarizing the review.

- Contextual in-line comments for specific bugs and architectural concerns.

Scalable Intensity

The depth of the review scales automatically with the size and complexity of the PR. A 1,000-line change receives a deep, multi-agent investigation, while a trivial change gets a lightweight pass. On average, a review takes approximately 20 minutes.

Administrative Control

Organizations can manage their usage of Claude Code Review through several governance tools:

- Monthly Organization Caps: Define and limit total monthly spend.

- Repository-Level Control: Select specific repositories where reviews should run.

- Analytics Dashboard: Track acceptance rates, total PRs reviewed, and associated costs.

Use Cases for Claude Code Review

Identifying Critical Logic Flaws

In complex production services, even a one-line change can break authentication or other security-critical behavior. Claude Code Review is designed to surface latent failure modes like these, which are "easy to read past" but obvious once an agent points them out.

Refactoring and Legacy Code Support

During refactors, such as ZFS encryption updates, the system can identify pre-existing bugs in adjacent code that the PR happens to touch. This helps ensure that new changes don't accidentally trigger latent issues such as type mismatches or cache failures.

Solving the Review Bottleneck

For teams where developers are stretched thin, Claude Code Review helps close the gap. At Anthropic, running the system raised the share of PRs receiving substantive review comments from 16% to 54%, allowing human reviewers to focus on final approvals rather than initial bug hunting.

How to Use Claude Code Review

Getting started with the research preview is straightforward for teams already using Claude Code on supported plans.

For Administrators

- Open your Claude Code settings.

- Enable the Code Review feature.

- Install the GitHub App and authorize access.

- Select the specific repositories you want the agents to monitor.

For Developers

Once the administrator has enabled the service, no further configuration is needed. Reviews run automatically whenever a new PR is opened, and the agents deliver their findings directly in the GitHub interface.

FAQ

Q: Does Claude Code Review replace human reviewers?

A: No. The system is designed to assist human reviewers, not replace them. It cannot approve PRs; approval remains a human responsibility. It acts as a first line of defense that surfaces bugs for human reviewers.

Q: How much does it cost?

A: Reviews are billed on token usage and generally average $15 to $25 each, depending on PR size and complexity.
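As a rough planning aid, the $15 to $25 average can be turned into a budget estimate for a monthly organization cap. The sketch below is hypothetical: `reviews_within_cap` and `monthly_spend_range` are illustrative names, the $2,000 cap and 120 PRs per month are example values, and because $15 to $25 is an average rather than a bound, individual reviews may cost more or less.

```python
import math

# Average per-review cost range quoted above (USD). These are averages,
# not hard bounds: a single large PR may cost more.
AVG_COST_LOW = 15.0
AVG_COST_HIGH = 25.0


def reviews_within_cap(monthly_cap_usd: float) -> tuple[int, int]:
    """Estimate how many reviews fit under a monthly cap.

    Returns (estimate at the high average cost, estimate at the low average cost).
    """
    at_high_cost = math.floor(monthly_cap_usd / AVG_COST_HIGH)
    at_low_cost = math.floor(monthly_cap_usd / AVG_COST_LOW)
    return at_high_cost, at_low_cost


def monthly_spend_range(prs_per_month: int) -> tuple[float, float]:
    """Estimate monthly spend if every PR opened that month is reviewed."""
    return prs_per_month * AVG_COST_LOW, prs_per_month * AVG_COST_HIGH


if __name__ == "__main__":
    # Hypothetical example: a $2,000 monthly cap and 120 PRs per month.
    print(reviews_within_cap(2000))   # (80, 133)
    print(monthly_spend_range(120))   # (1800.0, 3000.0)
```

In this example, 120 PRs a month would average $1,800 to $3,000, so a $2,000 cap might not cover every PR; limiting reviews to selected repositories is one of the administrative controls that can bring expected spend under the cap.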

Q: How accurate are the findings?

A: In internal testing at Anthropic, engineers marked fewer than 1% of the agents' findings as incorrect.

Q: Which plans include access to this feature?

A: It is currently available in research preview for the Claude Team plan and Claude Enterprise plan.

Q: How long does a review take?

A: While it varies by PR complexity, the average turnaround time is approximately 20 minutes.