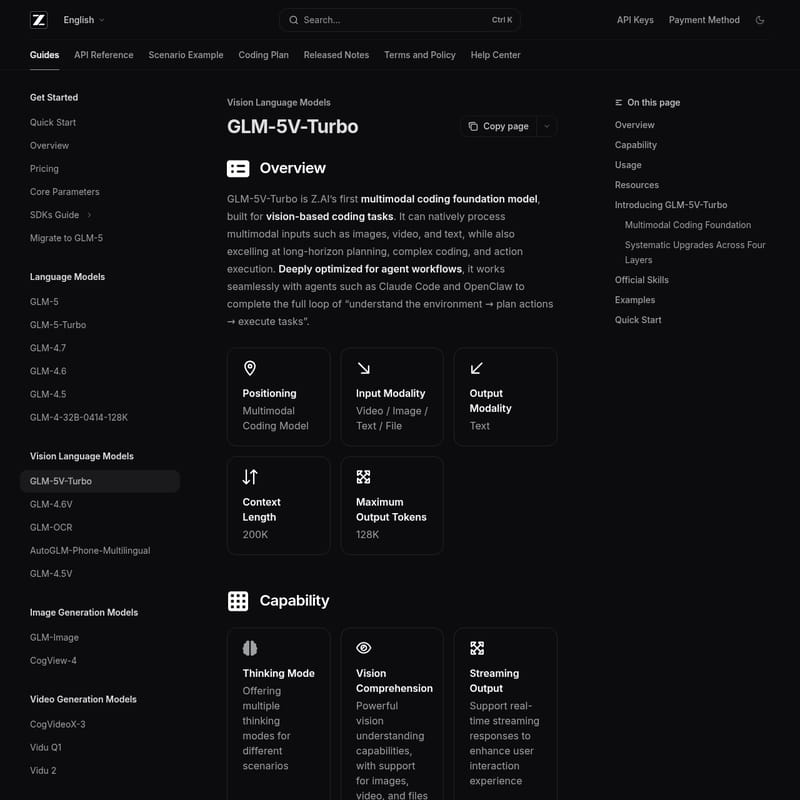

GLM-5V-Turbo

GLM-5V-Turbo: Z.AI's Advanced Multimodal Coding Foundation Model

GLM-5V-Turbo is Z.AI’s premier multimodal coding foundation model designed for vision-based coding and agentic workflows. Supporting image, video, and text inputs, it features a 200K context length and 128K output tokens. It excels at complex coding tasks, GUI exploration, and autonomous action execution, integrating seamlessly with agents like Claude Code and OpenClaw through native multimodal fusion and reinforcement learning.

2026-04-04


GLM-5V-Turbo Product Information

GLM-5V-Turbo: The Advanced Multimodal Coding Foundation Model

What's GLM-5V-Turbo?

GLM-5V-Turbo is Z.AI’s first multimodal coding foundation model, engineered for vision-based coding tasks. It natively processes video, images, text, and files.

The model is built for long-horizon planning, complex coding, and precise action execution. Deeply optimized for agent workflows, GLM-5V-Turbo works with agents such as Claude Code and OpenClaw, which lets it complete the full loop of understanding an environment, planning actions, and executing tasks. It offers a 200K context length and a maximum output of 128K tokens.

Features of GLM-5V-Turbo

Core Technical Specifications

- Input Modality: Video, Image, Text, and File support.

- Output Modality: High-quality Text generation.

- Context Length: Massive 200K window for long-form data processing.

- Maximum Output: Up to 128K tokens per response.

Advanced Capabilities

- Thinking Mode: Offers multiple thinking modes tailored for different operational scenarios.

- Vision Comprehension: Powerful understanding of visual assets, including video and document files.

- Streaming Output: Supports real-time streaming to enhance the interactive user experience.

- Function Call: Robust tool invocation capabilities for integration with external toolsets.

- Context Caching: Intelligent caching mechanisms to optimize performance during long-running conversations.

- Native Multimodal Fusion: Uses the CogViT vision encoder and MTP architecture for superior reasoning efficiency.
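The Function Call capability listed above could be exercised with a request body along these lines. This is only a sketch under assumptions: the page does not document the tool schema, so the OpenAI-style `tools` array is assumed, and both the `build_tool_payload` helper and the `get_weather` tool are hypothetical names used for illustration.

```shell
# Print a JSON request body that declares one callable tool.
# ASSUMPTION: the API accepts an OpenAI-style "tools" array; this page does not confirm the schema.
# "get_weather" is a hypothetical tool, not something shipped with the model.
build_tool_payload() {
  cat <<'EOF'
{
  "model": "glm-5v-turbo",
  "messages": [
    {
      "role": "user",
      "content": [
        {"type": "text", "text": "What is the weather in Beijing right now?"}
      ]
    }
  ],
  "tools": [
    {
      "type": "function",
      "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city",
        "parameters": {
          "type": "object",
          "properties": {"city": {"type": "string"}},
          "required": ["city"]
        }
      }
    }
  ]
}
EOF
}
```

The output of `build_tool_payload` would be sent as the body of a chat completions request; how the model reports a requested tool call in its reply should be checked against the official API reference.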

Official Skills

GLM-5V-Turbo comes equipped with specialized skills available via ClawHub, including:

- Image Captioning

- Visual Grounding

- Document-Grounded Writing

- Resume Screening

- Prompt Generation

Use Case Scenarios for GLM-5V-Turbo

GLM-5V-Turbo is designed for high-performance agentic and coding tasks:

- Frontend Recreation: Replicating mobile pages or website layouts based solely on design mockups and images.

- GUI Autonomous Exploration: Navigating and operating in real GUI environments like AndroidWorld and WebVoyager.

- Code Debugging: Identifying and fixing complex code issues through multimodal understanding.

- Deep Research and Search: Utilizing multimodal tools like box drawing, screenshots, and webpage reading for comprehensive data retrieval.

- Video Object Tracking: Identifying and tracking specific objects within video files for analysis.

How to Use GLM-5V-Turbo

Developers can access GLM-5V-Turbo through the Z.AI API. Below is an example of a basic call, followed by a note on enabling streaming.

Basic Call (cURL)

    curl -X POST \
      https://api.z.ai/api/paas/v4/chat/completions \
      -H "Authorization: Bearer your-api-key" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "glm-5v-turbo",
        "messages": [
          {
            "role": "user",
            "content": [
              {
                "type": "image_url",
                "image_url": {
                  "url": "https://example.com/image.png"
                }
              },
              {
                "type": "text",
                "text": "Where is the second bottle of beer from the right? Provide coordinates in [[xmin,ymin,xmax,ymax]] format"
              }
            ]
          }
        ],
        "thinking": {
          "type": "enabled"
        }
      }'
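The prompt in this request asks for coordinates in `[[xmin,ymin,xmax,ymax]]` format. A minimal sketch of pulling such boxes out of the reply text follows. It assumes the model returns integer coordinates in exactly that bracketed form; the page does not state whether the values are pixels or a normalized scale, and `extract_boxes` is a hypothetical helper name.

```shell
# Read reply text on stdin and print one "xmin,ymin,xmax,ymax" line per box found.
# ASSUMPTION: boxes appear as [[a,b,c,d]] with non-negative integers and optional spaces.
extract_boxes() {
  # -o prints only the matching part; a "]" outside a bracket expression is a literal.
  grep -oE '\[\[[0-9]+, *[0-9]+, *[0-9]+, *[0-9]+]]' |
    tr -d '[] '   # strip the brackets and any spaces, keeping digits and commas
}
```

For example, `printf '%s\n' 'The bottle is at [[120, 45, 210, 300]].' | extract_boxes` prints `120,45,210,300`.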

Streaming Call (cURL)

To enable real-time responses, add the "stream": true parameter to the JSON request body (not the request headers), as described in the GLM-5V-Turbo documentation.
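A streaming request might look like the sketch below, which reuses the endpoint and model name from the basic call. The helper names `build_stream_payload` and `stream_chat` and the `ZAI_API_KEY` environment variable are hypothetical, and the shape of the streamed chunks is not described on this page, so the sketch only prints them as they arrive.

```shell
# Print a JSON body with streaming turned on; the prompt text is passed as $1.
# NOTE: the prompt is inserted verbatim, so it must not contain double quotes or backslashes.
build_stream_payload() {
  cat <<EOF
{
  "model": "glm-5v-turbo",
  "stream": true,
  "messages": [
    {
      "role": "user",
      "content": [
        {"type": "text", "text": "$1"}
      ]
    }
  ]
}
EOF
}

# Send the request and print chunks as they arrive.
# -N turns off curl's output buffering; -d @- reads the request body from stdin.
# ZAI_API_KEY is a hypothetical environment variable holding your API key.
stream_chat() {
  build_stream_payload "$1" | curl -sS -N -X POST \
    https://api.z.ai/api/paas/v4/chat/completions \
    -H "Authorization: Bearer ${ZAI_API_KEY}" \
    -H "Content-Type: application/json" \
    -d @-
}
```

Calling `stream_chat "Describe this layout"` would send the request. Building the body in a separate function keeps the `"stream": true` flag visible and lets the payload be inspected without any network access.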

FAQ

What makes GLM-5V-Turbo different from standard language models?

Unlike pure-text models, GLM-5V-Turbo is a native multimodal foundation model. It integrates vision and coding capabilities through a systematic four-layer upgrade involving CogViT encoders and joint reinforcement learning across 30+ task types.

What platforms support GLM-5V-Turbo agents?

The model is optimized for agentic workflows, and its skills are currently available for installation on ClawHub.

How does the model handle visual grounding tasks?

GLM-5V-Turbo uses an expanded multimodal toolchain that includes webpage reading and box drawing, allowing it to provide precise coordinates and descriptions for objects found in images or videos.

What is the maximum context length for GLM-5V-Turbo?

The model supports a context length of 200K tokens, which suits large codebases and long video files.