RuView: Transforming WiFi Signals into Real-Time Human Pose Estimation and Vital Sign Monitoring Without Cameras

RuView, a project by ruvnet, applies WiFi DensePose technology to turn standard commercial WiFi signals into data about the people in a space. Using existing wireless infrastructure, the system performs real-time pose estimation, vital sign monitoring, and presence detection without capturing a single video pixel. It infers body position and health metrics from disruptions in the wireless signal rather than from visual imagery. This makes RuView a non-invasive option for environments where privacy is paramount, effectively turning ubiquitous WiFi signals into a sensor network for human activity and health metrics.

Key Takeaways

- Privacy-First Monitoring: RuView uses WiFi DensePose technology to sense people without capturing any video pixels or other visual data.

- Multi-Functional Sensing: The system provides real-time capabilities for pose estimation, vital sign monitoring, and presence detection.

- Commercial Hardware Compatibility: The technology is designed to work with standard commercial WiFi signals, lowering the barrier for widespread adoption.

- Real-Time Processing: RuView converts wireless signal data into pose, vital sign, and presence information in real time.

In-Depth Analysis

WiFi DensePose: The Core Technology

RuView applies the principles of WiFi DensePose to interpret how human bodies interact with wireless signals. Unlike traditional camera-based systems, which rely on light and optics, RuView analyzes how WiFi signals fluctuate and reflect as they bounce off individuals. This allows the system to map the human form and its movements in 3D space. Because it does not rely on visual sensors, it can operate in total darkness and through certain physical obstructions, offering an alternative to computer vision in settings where cameras struggle.
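
The idea that a moving body disturbs an otherwise steady signal can be illustrated with a very small sketch. This is not RuView's actual pipeline, which the source does not describe. It assumes a hypothetical stream of signal amplitude readings and flags a time window as "occupied" when the variance of those readings exceeds a threshold. The function name, window size, and threshold are all illustrative assumptions.

```python
import math
from statistics import pvariance


def detect_presence(amplitudes, window=20, threshold=0.05):
    """Flag each full window whose amplitude variance exceeds the threshold.

    All names and default values here are illustrative assumptions,
    not parameters taken from RuView.
    """
    flags = []
    # Step through the readings one non-overlapping window at a time.
    for start in range(0, len(amplitudes) - window + 1, window):
        chunk = amplitudes[start:start + window]
        flags.append(pvariance(chunk) > threshold)
    return flags


# Synthetic data: a nearly flat signal (empty room) followed by a
# strongly fluctuating one (someone moving through the signal path).
empty_room = [1.0 + 0.01 * math.sin(i) for i in range(20)]
occupied_room = [1.0 + 0.5 * math.sin(i) for i in range(20)]

print(detect_presence(empty_room + occupied_room))  # [False, True]
```

A variance threshold only separates "something is moving" from "nothing is moving". Recovering a full body pose from the same signals would require a far richer model than this sketch.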

Beyond Presence: Vital Signs and Pose Estimation

Simple motion sensors can detect presence, but RuView goes further. By processing commercial WiFi signals, the system can extract the subtle periodic patterns that correspond to vital signs such as breathing and heart rate, alongside full pose estimation. This combination makes it useful for both security and healthcare applications, where knowing a person's posture and physiological state is critical and recording video of them is not acceptable.
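
Because breathing is periodic, one common way to estimate its rate from any slowly varying signal is to look for the strongest frequency within a plausible breathing band. The sketch below shows that general technique on synthetic data. It is not taken from RuView. The function name, the 0.1 to 0.5 Hz band, and the 10 Hz sample rate are assumptions chosen for illustration.

```python
import math


def estimate_rate_bpm(samples, sample_rate_hz,
                      low_hz=0.1, high_hz=0.5, step_hz=0.01):
    """Return the dominant frequency in [low_hz, high_hz] as cycles per minute.

    Scans candidate frequencies with a direct Fourier sum. The band limits
    are illustrative assumptions for resting breathing, not RuView values.
    """
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]  # remove the constant offset

    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        angle = 2 * math.pi * freq / sample_rate_hz
        re = sum(x * math.cos(angle * n) for n, x in enumerate(centered))
        im = sum(x * math.sin(angle * n) for n, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0


# Synthetic 60-second trace sampled at 10 Hz: a 0.25 Hz "breathing"
# component plus a weaker 1.2 Hz component outside the search band.
rate_hz = 10.0
trace = [
    math.sin(2 * math.pi * 0.25 * n / rate_hz)
    + 0.1 * math.sin(2 * math.pi * 1.2 * n / rate_hz)
    for n in range(600)
]

print(round(estimate_rate_bpm(trace, rate_hz), 1))
```

On this synthetic trace the estimate comes out at about 15 cycles per minute, matching the 0.25 Hz component. Real WiFi measurements are much noisier, so a practical system would need filtering and motion rejection before a step like this.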

Industry Impact

RuView reflects a broader shift in the AI and IoT industries toward "invisible" sensing. By removing the need for cameras, it addresses growing public concern over surveillance and data privacy. In healthcare, the technology could enable continuous, non-intrusive monitoring of patients or elderly people in private spaces such as bedrooms and bathrooms. In the smart home and security sectors, it offers a way to track presence and activity without the bandwidth and privacy costs of high-definition video streaming.

Frequently Asked Questions

Question: Does RuView require specialized hardware to function?

No. RuView is designed to turn standard commercial WiFi signals into sensing data, which suggests it can run on existing wireless infrastructure rather than purpose-built sensors.

Question: How does RuView maintain user privacy?

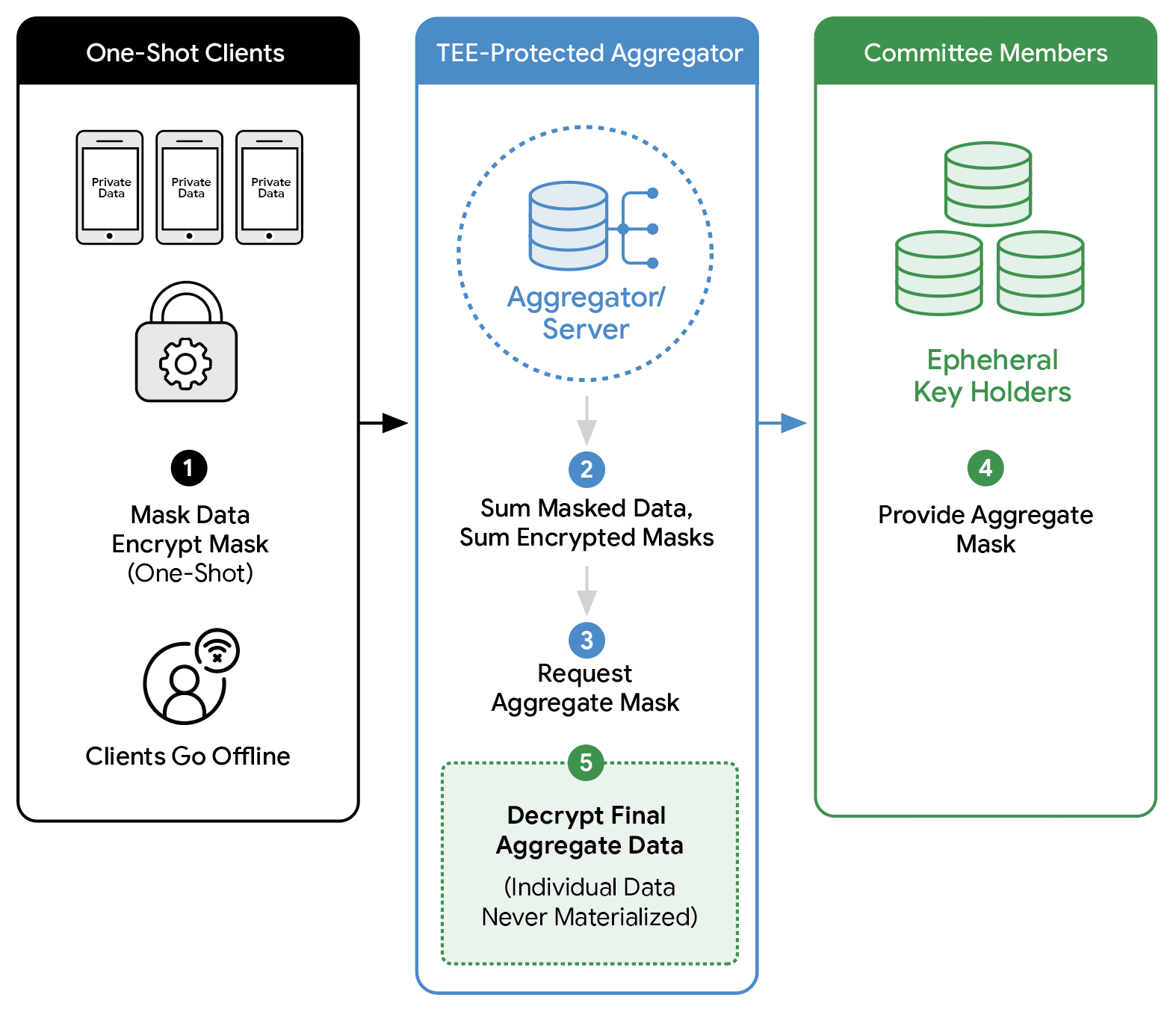

RuView maintains privacy by operating entirely without video pixels. It uses radio frequency signals to estimate poses and vitals, ensuring that no visual images of individuals are ever captured or stored.

Question: What specific metrics can RuView monitor?

RuView is capable of real-time pose estimation, monitoring vital signs, and detecting the presence of individuals within a WiFi-enabled environment.