Meta Superintelligence Labs Debuts Muse Spark: The First Frontier Model Built on a New Technology Stack

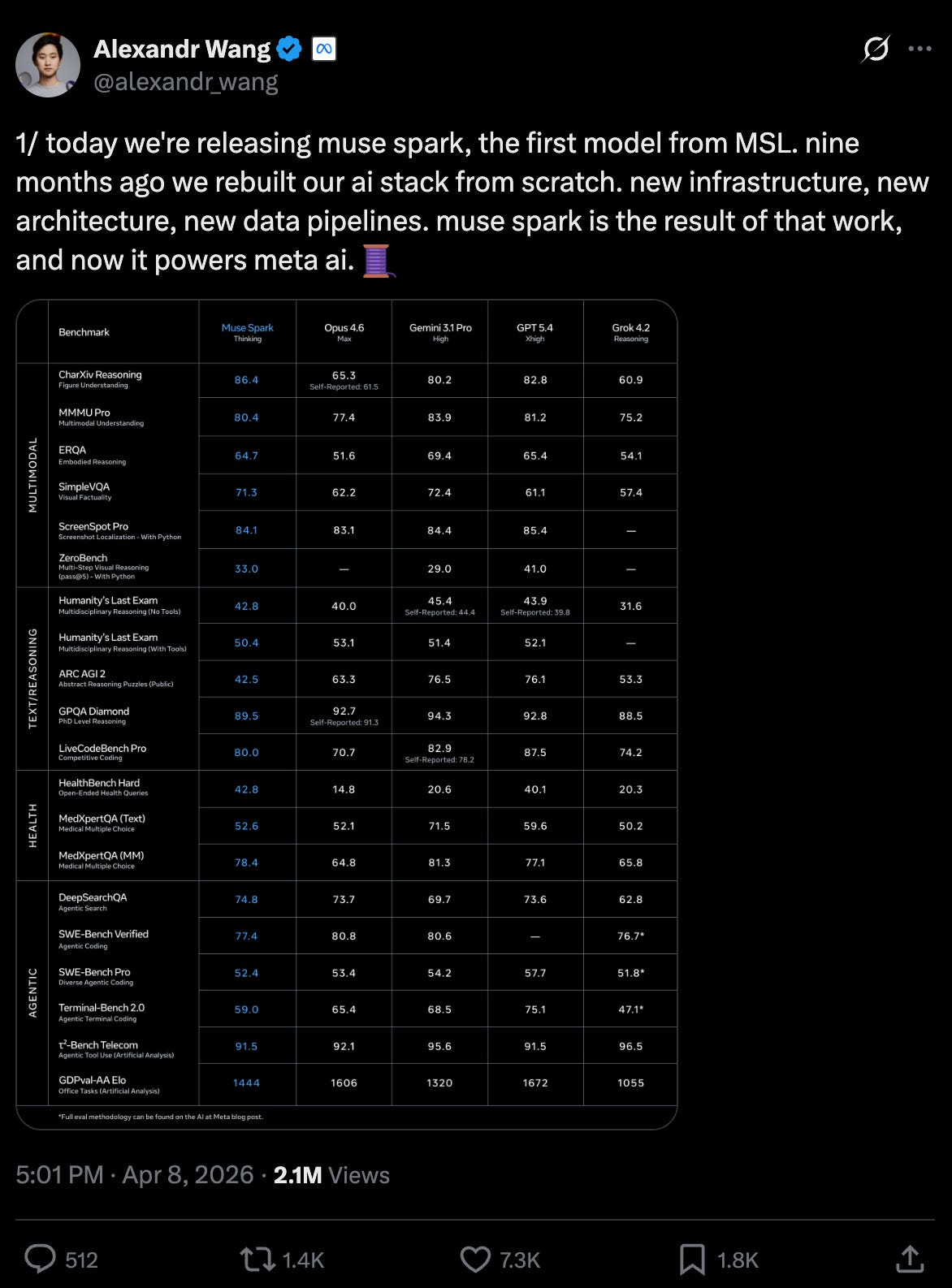

Meta Superintelligence Labs (MSL) has announced the release of Muse Spark, the first frontier model developed on the organization's entirely new technology stack. The launch follows a period of anticipation over when MSL would ship it. Technical specifications remain closely guarded, but the move to a completely new stack suggests a fundamental shift in how MSL approaches large-scale model architecture and deployment. The release caps an internal development effort aimed at establishing a fresh baseline for the lab's frontier AI capabilities.

Key Takeaways

- Official Launch: Meta Superintelligence Labs (MSL) has officially shipped Muse Spark.

- New Stack: Muse Spark is the first frontier model built on MSL’s completely new technology stack.

- Strategic Milestone: The release marks the end of a development period and the beginning of a new operational phase for the lab.

In-Depth Analysis

The Arrival of Muse Spark

Meta Superintelligence Labs (MSL) has reached a pivotal moment with the shipping of Muse Spark. As the first frontier model to emerge from its latest development cycle, Muse Spark is positioned as more than an incremental update. The announcement emphasizes that the team has moved from development to delivery, meeting the expectations that had built up around the lab's long-term projects.

A Completely New Stack

The most significant aspect of this release is the underlying infrastructure. Muse Spark is built on a "completely new stack," which suggests that MSL has overhauled some combination of its previous methodologies, frameworks, or hardware integrations, although the announcement does not say which. By moving off legacy systems and onto this foundation, MSL is positioning its frontier models to pursue efficiencies or capabilities that were presumably out of reach on its older stack.

Industry Impact

The shipping of Muse Spark may signal a shift in the competitive landscape of frontier models. By introducing a new stack, MSL is signaling a commitment to foundational innovation rather than only scaling existing architectures. Other industry players may re-evaluate their own technology stacks in response, although with technical specifications still undisclosed, the size of any performance or efficiency gain is not yet known. For MSL, shipping the model demonstrates that the lab can execute a complex, ground-up engineering project in the high-stakes field of superintelligence research.

Frequently Asked Questions

Question: What is Muse Spark?

Muse Spark is the latest frontier model released by Meta Superintelligence Labs (MSL), and the first built on its entirely new technology stack.

Question: Why is the "new stack" significant for MSL?

The new stack represents a fundamental change in MSL's development infrastructure and is expected to serve as the foundation for the lab's future frontier models. Details of what changed have not been disclosed.

Question: Who developed Muse Spark?

Muse Spark was developed and shipped by Meta Superintelligence Labs (MSL).