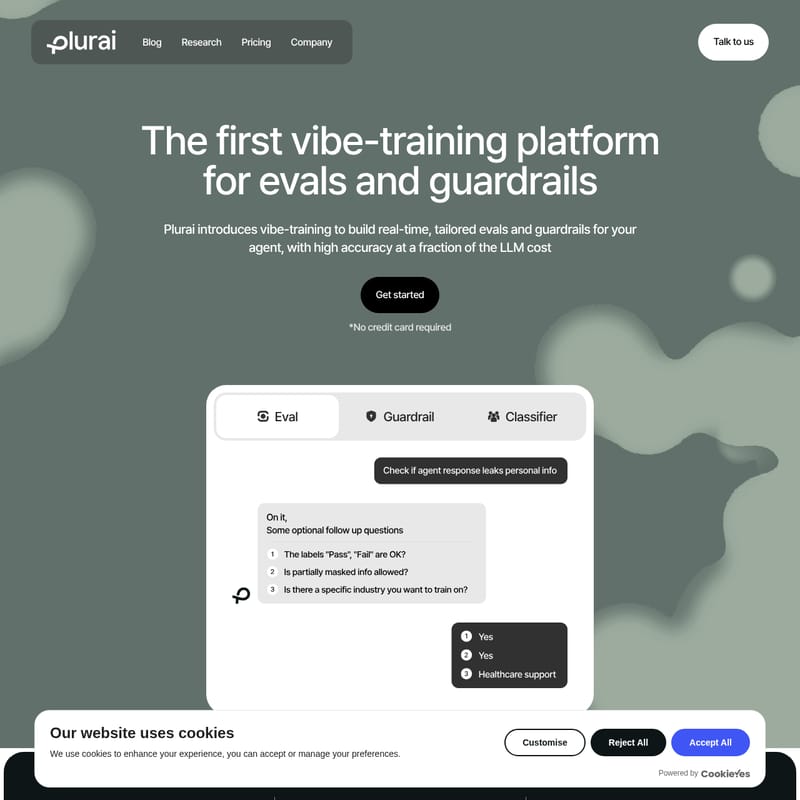

Plurai

Plurai: The First Vibe-Training Platform for High-Accuracy AI Evals and Guardrails

Plurai is a vibe-training platform designed to build real-time, tailored evals and guardrails for AI agents. By using purpose-built Small Language Models (SLMs) and optimized LLM evaluators, Plurai achieves a failure rate reduction of over 43% and a cost reduction of over 8x compared to GPT 5.2. With sub-100ms inference latency, intent calibration, and synthetic data generation, Plurai supports production-grade AI safety and performance across on-premises and VPC deployments.

2026-05-01

Plurai Product Information

Plurai: The Industry-Leading Vibe-Training Platform for AI Evals and Guardrails

In the rapidly evolving landscape of artificial intelligence, ensuring that AI agents perform reliably is a critical challenge. Plurai introduces the world's first vibe-training platform specifically engineered for building real-time, tailored evals and guardrails. By focusing on accuracy, cost-efficiency, and low latency, Plurai allows developers to bring their agents to a real-world production level without the traditional speed versus safety tradeoff.

What Is Plurai?

Plurai is a comprehensive platform that introduces vibe-training to the world of AI development. It is designed to help developers create high-quality evals and guardrails that are specifically tailored to the unique needs of their agents. Unlike general-purpose models, Plurai focuses on high accuracy at a fraction of the cost typically associated with Large Language Models (LLMs).

Plurai enables production-grade coverage by replacing expensive and slow "LLM-as-judge" approaches with optimized Small Language Models (SLMs). Whether you are working on conversation evaluation, grounding validation, or policy compliance, Plurai provides the infrastructure to run evaluations continuously and at scale.

Core Features of the Plurai Platform

Plurai is built on a foundation of performance and efficiency. Below are the key features that set the Plurai platform apart from traditional evaluation methods:

1. High Accuracy and Failure Rate Reduction

Plurai is engineered for precision. Compared to GPT 5.2, Plurai’s purpose-built evaluators achieve a failure rate reduction of over 43%. This level of accuracy is intended to make your evals and guardrails reliable enough for mission-critical production environments.

2. Significant Cost Reduction

One of the biggest hurdles in AI development is the cost of continuous evaluation. Plurai addresses this by providing a cost reduction of over 8x compared to GPT 5.2. By utilizing optimized Small Language Models (SLMs), Plurai allows you to achieve full production coverage without the massive overhead of traditional LLM-based judges.
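To make the two headline figures concrete, here is a minimal sketch of how a relative failure rate reduction and a cost reduction factor are commonly computed. The function names and all input numbers are hypothetical illustrations, not Plurai or GPT 5.2 measurements, and the source does not state the exact formulas behind its figures.

```python
def relative_reduction(baseline: float, candidate: float) -> float:
    """Fraction by which `candidate` is lower than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - candidate) / baseline


def reduction_factor(baseline_cost: float, candidate_cost: float) -> float:
    """How many times cheaper the candidate is than the baseline."""
    if candidate_cost <= 0:
        raise ValueError("candidate_cost must be positive")
    return baseline_cost / candidate_cost


# Hypothetical inputs chosen only to illustrate the arithmetic.
baseline_failure_rate = 0.10    # baseline evaluator is wrong on 10% of cases
candidate_failure_rate = 0.057  # candidate evaluator is wrong on 5.7% of cases
baseline_cost = 8.00            # cost per 1,000 evaluations, arbitrary units
candidate_cost = 1.00

failure_drop = relative_reduction(baseline_failure_rate, candidate_failure_rate)
cost_factor = reduction_factor(baseline_cost, candidate_cost)
print(f"failure rate reduction: {failure_drop:.0%}")
print(f"cost reduction: {cost_factor:.0f}x")
```

Under this reading, a 43% failure rate reduction means the evaluator makes 43% fewer mistakes than the baseline, not that its accuracy rises by 43 percentage points.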

3. Ultra-Low Inference Latency

For real-time guardrails, speed is essential. Plurai’s models boast an inference latency of less than 100ms. This rapid response time ensures that your agent remains safe and compliant in real-time interactions, eliminating the lag that often plagues AI safety layers.
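One way to enforce a latency target like this on the caller's side is to give each guardrail check a time budget and fall back to a safe default when the budget is exceeded. The sketch below assumes a 100ms budget and uses stand-in evaluator functions; `check_within_budget`, `fast_evaluator`, and `slow_evaluator` are hypothetical names, not part of any Plurai API.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Callable

# Shared pool, so a slow evaluator call does not block the caller while it finishes.
_POOL = ThreadPoolExecutor(max_workers=4)


def check_within_budget(
    evaluator: Callable[[str], bool],
    text: str,
    budget_s: float = 0.1,     # 100ms budget (assumed)
    on_timeout: bool = False,  # fail closed: treat a timeout as "blocked"
) -> bool:
    """Return the evaluator's verdict, or `on_timeout` if it takes too long."""
    future = _POOL.submit(evaluator, text)
    try:
        return future.result(timeout=budget_s)
    except FutureTimeout:
        future.cancel()  # best effort; a call that is already running is not interrupted
        return on_timeout


def fast_evaluator(text: str) -> bool:
    """Stand-in for a low-latency guardrail model."""
    return "forbidden" not in text


def slow_evaluator(text: str) -> bool:
    """Stand-in for an evaluator that misses the budget."""
    time.sleep(0.3)
    return True
```

Whether to fail closed (block on timeout) or fail open (allow on timeout) is a policy decision that depends on how costly a missed violation is for your agent.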

4. Purpose-Built SLMs and Optimized LLMs

Plurai offers a dual approach to model selection:

- Small Language Models (SLMs): These are purpose-built for specific tasks through intent calibration and are ideal for large-scale testing and real-time guardrails due to their efficiency.

- Optimized LLMs: For sampled data and offline evaluation workflows where maximum accuracy is required, Plurai provides LLM-based evaluators at a competitive cost.
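The dual approach above can be sketched as a simple router: every response gets a real-time check from a small evaluator, while a sampled fraction is queued for a more thorough offline pass. This is an illustrative pattern under assumed names (`DualEvaluator`, `sample_rate`, and the stand-in evaluator callables are hypothetical), not Plurai's actual interface.

```python
import random
from dataclasses import dataclass, field
from typing import Callable, List

Evaluator = Callable[[str], bool]  # returns True when the response passes


@dataclass
class DualEvaluator:
    realtime: Evaluator        # stand-in for a purpose-built SLM
    offline: Evaluator         # stand-in for an optimized LLM evaluator
    sample_rate: float = 0.05  # fraction of traffic sent to the offline pass (assumed)
    rng: random.Random = field(default_factory=random.Random)
    offline_queue: List[str] = field(default_factory=list)

    def check(self, response: str) -> bool:
        """Real-time verdict for every response; sample some for offline review."""
        if self.rng.random() < self.sample_rate:
            self.offline_queue.append(response)
        return self.realtime(response)

    def run_offline(self) -> List[bool]:
        """Evaluate the sampled responses with the offline evaluator, then clear the queue."""
        results = [self.offline(r) for r in self.offline_queue]
        self.offline_queue.clear()
        return results
```

Comparing the offline verdicts against the real-time ones for the same sampled responses is one way to monitor whether the smaller evaluator is drifting.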

5. Intent Calibration and Synthetic Data Generation

Plurai does not require prior labeled data to get started. Through a proprietary intent calibration process, the platform deeply understands your specific tasks. If you lack historical datasets, Plurai can generate high-fidelity synthetic data tailored to your use case, ensuring your evaluators are trained on high-quality, relevant information.

6. Secure On-Prem and VPC Deployment

For organizations with strict security and data control requirements, Plurai can be deployed within your Virtual Private Cloud (VPC) or on-premises. This deployment model enhances security, further lowers latency, and gives you complete control over your infrastructure.

Use Case Scenarios for Plurai

The flexibility of the Plurai platform, including its Proton product, allows it to be used across a wide range of semantic tasks. Below are common use cases where Plurai’s evals and guardrails excel:

- Conversation Evaluation: Analyze and score the quality of agent-user interactions to ensure brand alignment and effectiveness.

- Semantic Similarity: Measure how closely an agent's response aligns with intended meanings or reference materials.

- Grounding Validation: Verify that the information provided by an AI agent is factually grounded in the provided source material, reducing hallucinations.

- Policy Compliance: Implement real-time guardrails to ensure that AI agents adhere to strict corporate or legal policies during live interactions.

- Large-Scale Testing: Run continuous evaluations across massive datasets to monitor performance over time without incurring prohibitive costs.

FAQ

How do I use the evals and guardrails on my agents?

You can use Plurai models across a wide range of semantic tasks, including conversation evaluation, semantic similarity, grounding validation, policy compliance, and more. You can explore the use case catalog provided by Plurai to see the full scope of possibilities.

How is Plurai different from the evals I already have?

Plurai uses a proprietary intent calibration process to deeply understand your task, generating a high-quality testing set and a consistent evaluator. Traditional LLM-as-judge approaches are expensive, slow, and difficult to run at full production coverage. Plurai instead uses optimized Small Language Models (SLMs) that are cost-efficient and scalable.

Can Plurai be deployed on-prem?

Yes. Plurai can be deployed in your VPC for maximum security, data control, and even lower latency. You can contact the Plurai team to discuss your specific infrastructure and deployment requirements.

What makes Plurai’s SLMs so accurate and cost-effective?

Plurai’s SLMs are purpose-built for specific tasks through intent calibration and synthetic data generation. Because the models are trained on highly targeted datasets rather than general-purpose datasets, they achieve high accuracy with far lower latency and cost. This allows for production-grade coverage that can run continuously.

Do you only have SLMs or other models as well?

In addition to purpose-built SLMs, Plurai offers optimized LLM-based evaluators for maximum accuracy at competitive costs. These are ideal for sampled data and offline evaluation workflows. For real-time applications, SLMs remain the recommended choice.

Is Plurai’s Proton product only for evals and guardrails?

No. You can use Proton models across various semantic tasks, including grounding validation, semantic similarity, and policy compliance. It is a versatile tool for ensuring the overall quality of your AI agent.

Get Started with Plurai

Ready to eliminate the speed vs. safety tradeoff in your AI development process? You can get started with Plurai today, and no credit card is required. Whether you want to test the public FAQ or request a demo, Plurai provides the tools to bring your agent to a real-world production level.

- Failure rate reduction: >43% vs GPT 5.2

- Cost reduction: >8x vs GPT 5.2

- Inference latency: <100ms

Experience the power of vibe-training and optimized SLMs to secure and scale your AI agents with Plurai.