GLM-5-Turbo

GLM-5-Turbo: OpenClaw Native Foundation Model for Complex Agent Tasks

GLM-5-Turbo is a cutting-edge foundation model from Z.AI, specifically engineered for the OpenClaw ecosystem. Optimized for long-chain execution, tool invocation, and complex instruction following, it supports a 200K context length and 128K maximum output tokens. Whether you are building autonomous agents for data analysis, software development, or scheduled tasks, GLM-5-Turbo provides the stability and reasoning required for professional-grade AI workflows.

2026-03-18

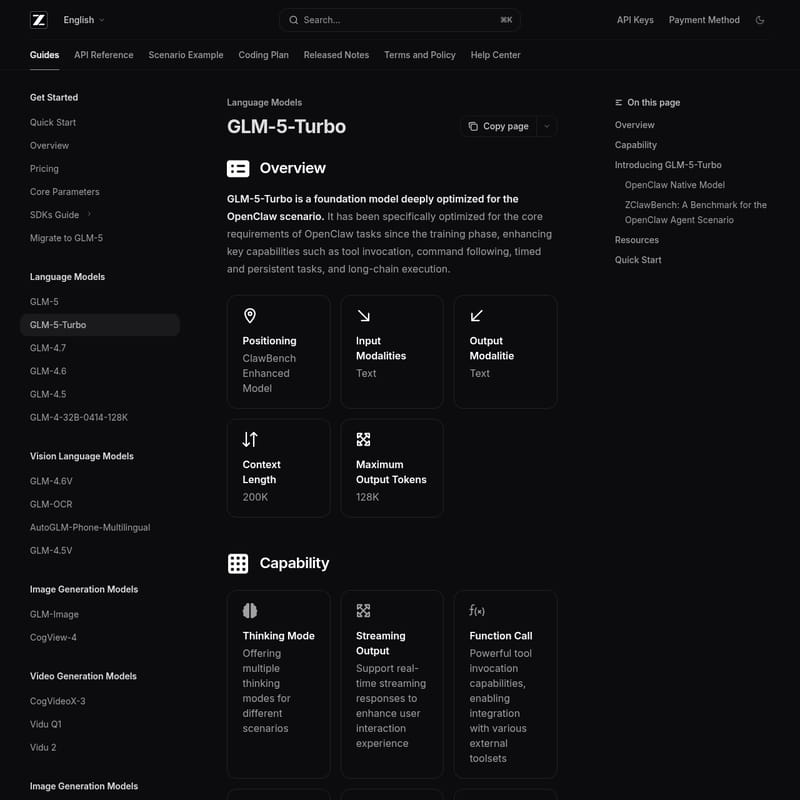

GLM-5-Turbo Product Information

GLM-5-Turbo: Optimized Foundation Model for the OpenClaw Ecosystem

GLM-5-Turbo is a specialized foundation model deeply optimized for the OpenClaw scenario. Developed by Z.AI, it is engineered from the ground up for the core requirements of autonomous agents: tool invocation, complex instruction following, and persistent long-chain task execution. By bridging the gap between dialogue and actionable execution, GLM-5-Turbo helps developers build more reliable and sophisticated AI-driven workflows.

What Is GLM-5-Turbo?

GLM-5-Turbo is a text-based foundation model positioned as a "ClawBench Enhanced Model." Unlike general-purpose models, it has been tuned for the OpenClaw environment starting from the training phase. It serves as an engine for agents that need high-throughput processing and stable execution over long-running tasks.

Key specifications of GLM-5-Turbo include:

- Input Modalities: Text

- Output Modalities: Text

- Context Length: 200K tokens

- Maximum Output Tokens: 128K tokens

By focusing on the OpenClaw Native Model approach, Z.AI ensures that GLM-5-Turbo can decompose multi-layered instructions and maintain execution continuity, making it a superior choice for professional productivity and operational engineering.

Features of GLM-5-Turbo

GLM-5-Turbo offers a suite of advanced capabilities designed for modern agentic workflows:

1. Advanced Thinking Modes

The model offers multiple Thinking Modes tailored for different scenarios, allowing for deeper reasoning and more accurate problem-solving when faced with complex queries.
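The usage examples in this document pass `thinking={"type": "enabled"}`. As a minimal sketch, a small helper can switch that setting per request. The helper name `build_chat_kwargs` is hypothetical, and the `"disabled"` value is an assumed counterpart to `"enabled"` that should be checked against the API reference.

```python
from typing import Any


def build_chat_kwargs(prompt: str, deep_reasoning: bool, max_tokens: int = 4096) -> dict[str, Any]:
    """Build keyword arguments for client.chat.completions.create().

    "enabled" appears in this document's examples; "disabled" is an
    assumed counterpart, not confirmed here.
    """
    thinking_type = "enabled" if deep_reasoning else "disabled"
    return {
        "model": "glm-5-turbo",
        "messages": [{"role": "user", "content": prompt}],
        "thinking": {"type": thinking_type},
        "max_tokens": max_tokens,
    }


kwargs = build_chat_kwargs("Plan a three-step data migration.", deep_reasoning=True)
print(kwargs["thinking"])
```

The resulting dictionary could then be passed as `client.chat.completions.create(**kwargs)`.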

2. Precise Tool Calling and Function Calls

One of the standout features of GLM-5-Turbo is high-precision Function Calling. The model is designed to invoke external toolsets reliably, so that tasks can move from simple conversation to real-world execution.
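To make the function-calling flow concrete, the sketch below declares one tool and dispatches a returned call to a local function. It assumes the endpoint accepts the OpenAI-compatible `tools` schema and returns calls carrying `function.name` plus JSON-encoded `function.arguments`; that shape is not confirmed by this document. The names `get_weather`, `REGISTRY`, `dispatch_tool_call`, and the sample call are all hypothetical.

```python
import json
from typing import Any, Callable


# Hypothetical tool; a real agent would call an external service here.
def get_weather(city: str) -> dict[str, Any]:
    return {"city": city, "forecast": "unknown (placeholder)"}


# Tool declaration in the OpenAI-compatible "tools" format (assumed to be accepted).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Look up the weather forecast for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
]

REGISTRY: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch_tool_call(tool_call: dict[str, Any]) -> str:
    """Run the local function named in a tool call and return a JSON result string."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(tool_call["function"]["arguments"])
        result = REGISTRY[name](**args)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed or mismatched arguments are reported back instead of raising.
        return json.dumps({"error": str(exc)})
    return json.dumps(result)


# Simulated tool call, shaped like the assumed response fragment.
sample_call = {"function": {"name": "get_weather", "arguments": '{"city": "Beijing"}'}}
print(dispatch_tool_call(sample_call))
```

Returning errors as JSON rather than raising lets the agent loop feed the failure back to the model as a tool result, which is one common way to keep a long chain running.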

3. Context Caching and Structured Output

To optimize performance in long conversations, GLM-5-Turbo utilizes an intelligent Context Caching mechanism. Additionally, it supports Structured Output (such as JSON), which is essential for seamless system integration and data processing.
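Structured output is most useful when the caller validates it before passing it downstream. The sketch below assumes JSON mode is requested with `response_format={"type": "json_object"}`, following the OpenAI-compatible convention; that parameter is an assumption here. The function `parse_structured_reply` and the two-field schema are hypothetical.

```python
import json
from typing import Any

# Assumed request fragment; verify the parameter name against the API reference.
RESPONSE_FORMAT = {"type": "json_object"}

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"title": str, "priority": int}


def parse_structured_reply(content: str) -> dict[str, Any]:
    """Parse a JSON reply and check required fields and their types."""
    data = json.loads(content)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError(f"field {field!r} missing or not {expected_type.__name__}")
    return data


# Simulated model output, standing in for response.choices[0].message.content.
reply = '{"title": "Rotate API keys", "priority": 2}'
print(parse_structured_reply(reply))
```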

4. OpenClaw Native Optimization

From training data construction to optimization objectives, GLM-5-Turbo is built for real-world agent workflows. It excels in:

- Instruction Following: Superior decomposition of multi-layered instructions.

- Scheduled and Persistent Tasks: Better understanding of time dimensions and uninterrupted execution.

- High-Throughput Long Chains: Faster and more stable performance for data-intensive logic.

Use Case Scenarios

GLM-5-Turbo is designed for a diverse range of users, from developers to research analysts. Common use cases include:

- Software Development: Automating environment setup and code generation within the OpenClaw ecosystem.

- Data Analysis and Information Retrieval: Handling high-throughput data chains for financial professionals and operations engineers.

- Content Creation: Enabling content creators to generate structured materials using modular "Skills."

- Scheduled Operations: Managing tasks that require time-related triggers and persistent, continuous execution.

- Multi-Agent Collaboration: Facilitating task division and collaborative planning among various AI agents.

How to Use GLM-5-Turbo

GLM-5-Turbo can be integrated through the official Python SDK, plain HTTP requests, or the OpenAI SDK. The examples below show how to get started.

Using the Official Python SDK

First, install the SDK:

```shell
pip install zai-sdk
```

Then, implement a basic call:

```python
from zai import ZaiClient

client = ZaiClient(api_key="your-api-key")

response = client.chat.completions.create(
    model="glm-5-turbo",
    messages=[
        {"role": "user", "content": "Z.AI Open Platform"}
    ],
    thinking={"type": "enabled"},
    max_tokens=4096,
    temperature=1.0
)

print(response.choices[0].message)
```

Using cURL for Basic API Calls

```shell
curl -X POST "https://api.z.ai/api/paas/v4/chat/completions" \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer your-api-key" \
  -d '{
    "model": "glm-5-turbo",
    "messages": [{"role": "user", "content": "Z.AI Open Platform"}],
    "thinking": {"type": "enabled"},
    "max_tokens": 4096
  }'
```

FAQ

Q: What makes GLM-5-Turbo different from the standard GLM-5?

A: GLM-5-Turbo is specifically optimized for OpenClaw scenarios. It shows substantial improvements in tool-calling stability, instruction decomposition, and long-chain task execution, as measured by the ZClawBench benchmark.

Q: What is ZClawBench?

A: ZClawBench is an end-to-end benchmark introduced by Z.AI to evaluate model performance within the OpenClaw agent ecosystem. It covers tasks such as environment setup, data analysis, and content creation.

Q: Does GLM-5-Turbo support streaming?

A: Yes. It supports Streaming Output, which returns the response incrementally so users see text as it is generated.
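As a rough sketch of consuming a streamed reply, the helper below prints text fragments as they arrive and returns the full text. It assumes streaming is requested with `stream=True` and that each chunk exposes `choices[0].delta.content`, as in OpenAI-compatible APIs; neither detail is confirmed in this document. `collect_stream` and `fake_chunk` are hypothetical names, and the chunks here are simulated so the example runs offline.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream(chunks: Iterable) -> str:
    """Print streamed text fragments as they arrive and return the full text."""
    parts = []
    for chunk in chunks:
        if not chunk.choices:
            continue
        delta = chunk.choices[0].delta
        text = getattr(delta, "content", None)
        if text:  # some chunks may carry no text
            print(text, end="", flush=True)
            parts.append(text)
    print()
    return "".join(parts)


def fake_chunk(text):
    """Build a stand-in chunk with the assumed shape, for offline testing."""
    return SimpleNamespace(choices=[SimpleNamespace(delta=SimpleNamespace(content=text))])


# With a real client this might be:
#   collect_stream(client.chat.completions.create(..., stream=True))
full_text = collect_stream([fake_chunk("Hello"), fake_chunk(None), fake_chunk(", world")])
```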

Q: Can I use GLM-5-Turbo with the OpenAI SDK?

A: Yes, you can use the OpenAI Python SDK by pointing the base_url to https://api.z.ai/api/paas/v4/ and using your Z.AI API key.
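A minimal sketch of that setup follows, assuming the `openai` package (version 1 or later) is installed. The helper names `make_client` and `ask` and the environment variable `ZAI_API_KEY` are hypothetical. The `thinking` parameter from the earlier examples is left out because the OpenAI SDK does not accept it as a named argument.

```python
import os

BASE_URL = "https://api.z.ai/api/paas/v4/"


def make_client(api_key: str):
    """Create an OpenAI SDK client that targets the Z.AI endpoint."""
    from openai import OpenAI  # imported lazily; requires `pip install openai`
    return OpenAI(api_key=api_key, base_url=BASE_URL)


def ask(client, prompt: str) -> str:
    """Send one user message to glm-5-turbo and return the reply text."""
    response = client.chat.completions.create(
        model="glm-5-turbo",
        messages=[{"role": "user", "content": prompt}],
        max_tokens=4096,
    )
    return response.choices[0].message.content


if __name__ == "__main__":
    # ZAI_API_KEY is a hypothetical environment variable name.
    api_key = os.environ.get("ZAI_API_KEY")
    if api_key:
        print(ask(make_client(api_key), "Z.AI Open Platform"))
    else:
        print("Set ZAI_API_KEY to send a real request.")
```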