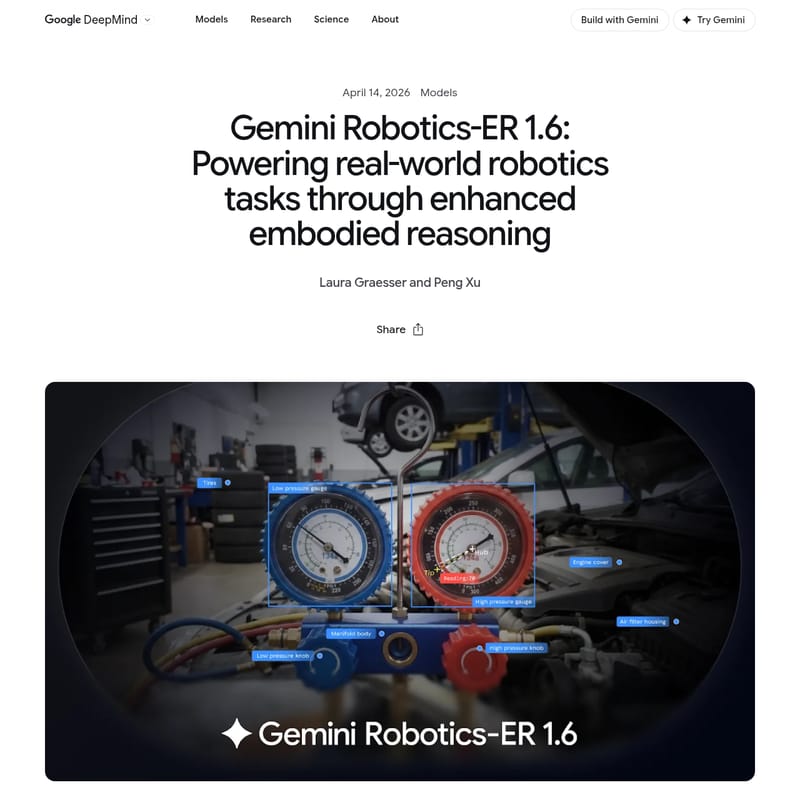

Gemini Robotics-ER 1.6

Gemini Robotics-ER 1.6: Advanced Embodied Reasoning Model for Next-Generation Autonomous Systems

Gemini Robotics-ER 1.6 is Google DeepMind's latest reasoning-first AI model designed for physical agents. It bridges the gap between digital intelligence and physical action through enhanced embodied reasoning, spatial understanding, and multi-view perception. The model excels at tasks such as precise pointing, success detection, and complex instrument reading, allowing robots to navigate and interact with the real world autonomously. By integrating agentic vision and code execution, Gemini Robotics-ER 1.6 gives developers a toolset for building safer, more capable robotics applications. Available via the Gemini API and Google AI Studio, it improves on previous generations in spatial logic, compliance with physical safety constraints, and real-world industrial utility.

2026-04-17


Gemini Robotics-ER 1.6 Product Information

Gemini Robotics-ER 1.6: Revolutionizing Embodied Reasoning in AI

Gemini Robotics-ER 1.6, developed by Google DeepMind, is a next-generation model for physical agents. It is designed to let robots reason about the physical world, moving beyond simple instruction following to embodied reasoning.

What Is Gemini Robotics-ER 1.6?

Gemini Robotics-ER 1.6 is a reasoning-first AI model that enables robots to understand and interact with their environments with unprecedented precision. It acts as a high-level reasoning engine for robotic systems, bridging the gap between digital intelligence and physical action. Unlike standard language models, Gemini Robotics-ER 1.6 specializes in spatial and physical reasoning, allowing robots to interpret complex visual data, plan tasks, and detect success in real-world scenarios.

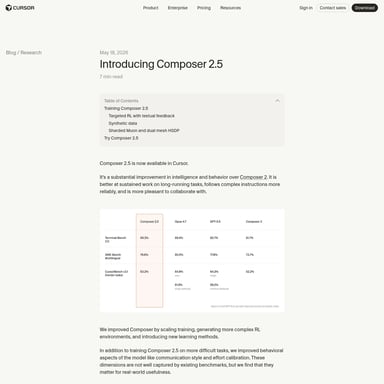

Built upon the foundations of the Gemini architecture, this model natively calls tools such as Google Search, vision-language-action models (VLAs), and third-party user-defined functions to execute sophisticated operations. It represents a significant upgrade over Gemini Robotics-ER 1.5 and Gemini 3.0 Flash, particularly in areas like spatial logic and instrument reading.

Features of Gemini Robotics-ER 1.6

Enhanced Embodied Reasoning

The core of Gemini Robotics-ER 1.6 is its ability to perform embodied reasoning. This allows a robot to navigate complex facilities, interpret physical gauges, and adapt to dynamic environments by reasoning through spatial and physical constraints.

Precision Pointing and Spatial Logic

Pointing is a fundamental capability in this model. Gemini Robotics-ER 1.6 uses points to express:

- Spatial reasoning: Precision object detection and counting.

- Relational logic: Comparing objects and defining "from-to" relationships.

- Motion reasoning: Identifying optimal grasp points and mapping trajectories.

- Constraint compliance: Reasoning through complex prompts to identify objects that fit specific physical criteria.
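As a minimal sketch of how a client might consume pointing output: the Gemini Robotics-ER 1.5 documentation describes points returned as JSON objects with a `point` field in `[y, x]` order, normalized to a 0-1000 range. Assuming version 1.6 keeps that format (an assumption, not confirmed on this page), the snippet below converts such points to pixel coordinates and counts detections per label. The sample response string is invented for illustration.

```python
import json
from collections import Counter


def points_to_pixels(response_text, width, height):
    """Convert normalized [y, x] points (0-1000) into pixel coordinates."""
    items = json.loads(response_text)
    pixels = []
    for item in items:
        y_norm, x_norm = item["point"]  # note the y-before-x order
        pixels.append({
            "label": item["label"],
            "x": round(x_norm / 1000 * width),
            "y": round(y_norm / 1000 * height),
        })
    return pixels


def count_by_label(pixels):
    """Count how many points were returned for each label."""
    return Counter(p["label"] for p in pixels)


# Invented sample reply in the assumed format.
sample = (
    '[{"point": [500, 250], "label": "bolt"},'
    ' {"point": [100, 900], "label": "bolt"},'
    ' {"point": [750, 500], "label": "wrench"}]'
)

pts = points_to_pixels(sample, width=640, height=480)
print(pts[0])
print(count_by_label(pts))
```

Keeping the conversion separate from the counting makes it easy to reuse the pixel coordinates for drawing overlays or for handing grasp targets to a motion planner.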

Multi-View Success Detection

Autonomy requires knowing when a task is complete. Gemini Robotics-ER 1.6 advances multi-view reasoning, integrating data from multiple camera streams (such as overhead and wrist-mounted feeds) to determine task success even in occluded or poorly lit environments.

Agentic Vision and Instrument Reading

A standout feature of Gemini Robotics-ER 1.6 is its ability to read analog and digital instruments. By combining visual reasoning with code execution (a process known as agentic vision), the model can zoom into images, estimate proportions on gauges, and interpret liquid levels in sight glasses with sub-tick accuracy, that is, at a resolution finer than the spacing between scale marks.
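The arithmetic behind reading an analog gauge can be illustrated with a short sketch. This is not the model's actual code. It assumes a hypothetical dial whose scale is linear in angle, and it assumes the needle angle and the angles of the minimum and maximum marks have already been estimated from the image. All names and numbers are illustrative.

```python
def gauge_reading(needle_deg, min_deg, max_deg, min_value, max_value):
    """Linearly interpolate a gauge value from the needle angle.

    All angles are measured in degrees in the same direction from the same
    reference, and the scale sweeps from min_deg up to max_deg.
    """
    sweep = max_deg - min_deg
    if sweep <= 0:
        raise ValueError("max_deg must be greater than min_deg")
    fraction = (needle_deg - min_deg) / sweep
    fraction = min(1.0, max(0.0, fraction))  # clamp to the printed scale
    return min_value + fraction * (max_value - min_value)


# Hypothetical pressure gauge: 0 bar at 45 degrees, 10 bar at 315 degrees,
# needle estimated at 180 degrees.
reading = gauge_reading(
    needle_deg=180, min_deg=45, max_deg=315, min_value=0.0, max_value=10.0
)
print(f"{reading:.2f} bar")
```

Because the result is interpolated from a continuous angle rather than snapped to the nearest printed mark, the estimate can fall between ticks, which is what a sub-tick reading requires.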

Superior Safety Compliance

Safety is integrated at every level. Gemini Robotics-ER 1.6 is presented as Google DeepMind's safest robotics model to date: compared to Gemini 3.0 Flash, it adheres more closely to physical safety constraints and better identifies safety hazards in both text and video scenarios.

Use Case Scenarios

Industrial Facility Inspection

In collaboration with Boston Dynamics, Gemini Robotics-ER 1.6 is used by robots like Spot to monitor industrial instruments. The model allows the robot to visit thermometers and pressure gauges, interpret their readings autonomously, and react to potential challenges in a facility setting.

Complex Object Manipulation

Using its refined spatial reasoning, Gemini Robotics-ER 1.6 can be used in logistics and warehousing to identify, count, and move specific items while adhering to gripper constraints, such as avoiding heavy objects or hazardous materials.
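A caller-side guard can complement the model's own constraint reasoning. The sketch below is hypothetical: the `Candidate` fields, the 2 kg payload limit, and the hazard flag are assumptions made for illustration and are not part of any Gemini API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """An object the robot might pick up (hypothetical structure)."""
    label: str
    est_weight_kg: float
    hazardous: bool = False


def graspable(candidates, max_payload_kg):
    """Keep only candidates that are non-hazardous and within the payload limit."""
    return [
        c for c in candidates
        if not c.hazardous and c.est_weight_kg <= max_payload_kg
    ]


items = [
    Candidate("cardboard box", 1.2),
    Candidate("steel ingot", 8.0),
    Candidate("solvent can", 0.9, hazardous=True),
]

allowed = graspable(items, max_payload_kg=2.0)
print([c.label for c in allowed])
```

Running a deterministic check like this after the model's selection gives a second line of defense that does not depend on prompt wording.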

Research and Development

Developers can use the Gemini API and Google AI Studio to build responsible AI applications at scale. The model’s ability to use points as intermediate steps for mathematical operations makes it a powerful tool for experimental robotics research.

How to Use Gemini Robotics-ER 1.6

To begin implementing Gemini Robotics-ER 1.6 in your robotics projects, follow these steps:

- Access the Model: Navigate to Google AI Studio or use the Gemini API to access the Gemini Robotics-ER 1.6 model.

- Configuration: Utilize the developer Colab provided by Google DeepMind to understand how to configure the model for embodied reasoning tasks.

- Prompting: Input visual data (images or video streams) and provide prompts that require spatial reasoning or task planning.

- Integration: Set up the model to call necessary tools, such as VLAs or custom code execution functions, to perform high-level tasks like instrument reading or success detection.

- Safety Testing: Leverage the model’s built-in safety policies to ensure your robot adheres to physical constraints and injury risk protocols.
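The access and prompting steps above can be sketched with the `google-genai` Python SDK. Treat this as a sketch under stated assumptions rather than a reference implementation: the model identifier is a guess to be confirmed in Google AI Studio, the prompt wording and the `[y, x]` 0-1000 point format follow the Gemini Robotics-ER 1.5 documentation and may differ for 1.6, and `build_pointing_prompt`, `parse_json_reply`, and `ask_model` are hypothetical helper names. The SDK is imported only inside `ask_model`, so the parsing helpers run without network access.

```python
import json

# Assumed identifier; confirm the exact model name in Google AI Studio.
MODEL_ID = "gemini-robotics-er-1.6-preview"


def build_pointing_prompt(object_names):
    """Build a prompt asking for points in the assumed JSON format."""
    names = ", ".join(object_names)
    return (
        f"Point to the following objects in the image: {names}. "
        'Answer as a JSON list: [{"point": [y, x], "label": <name>}], '
        "with coordinates normalized to 0-1000."
    )


def parse_json_reply(text):
    """Parse a JSON reply, tolerating an optional Markdown code fence."""
    fence = "`" * 3
    cleaned = text.strip()
    if cleaned.startswith(fence):
        cleaned = cleaned[len(fence):]
        # Drop the rest of the opening fence line (for example "json").
        cleaned = cleaned[cleaned.find("\n") + 1:]
        if cleaned.rstrip().endswith(fence):
            cleaned = cleaned.rstrip()[:-len(fence)]
    return json.loads(cleaned)


def ask_model(image_bytes, object_names):
    """Send one PNG image and a pointing prompt to the model.

    Requires the google-genai package, an API key in the environment,
    and network access.
    """
    from google import genai
    from google.genai import types

    client = genai.Client()
    response = client.models.generate_content(
        model=MODEL_ID,
        contents=[
            types.Part.from_bytes(data=image_bytes, mime_type="image/png"),
            build_pointing_prompt(object_names),
        ],
    )
    return parse_json_reply(response.text)


# Offline demonstration of the parser with an invented, fenced reply.
fence = "`" * 3
demo_reply = fence + 'json\n[{"point": [420, 310], "label": "valve"}]\n' + fence
print(parse_json_reply(demo_reply))
```

For multi-view success detection, the same `contents` list could carry several image parts (for example an overhead and a wrist-camera frame) ahead of the text prompt.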

FAQ

Q: How does Gemini Robotics-ER 1.6 differ from Gemini 3.0 Flash? A: While Gemini 3.0 Flash is a powerful baseline, Gemini Robotics-ER 1.6 shows significant improvements in spatial and physical reasoning, specifically in pointing, counting, and success detection. It also introduces the "agentic vision" capability for precise instrument reading, which is less refined in the Flash model.

Q: Can Gemini Robotics-ER 1.6 handle multiple camera views? A: Yes, the model is designed for multi-view reasoning, allowing it to synthesize information from various camera angles to form a coherent understanding of the environment and task progress.

Q: Is Gemini Robotics-ER 1.6 available for public use? A: As of April 14, 2026, the model is available to developers via the Gemini API and Google AI Studio.

Q: What is "Agentic Vision"? A: Agentic vision is a feature in Gemini Robotics-ER 1.6 that combines visual reasoning with code execution. It allows the model to perform intermediate steps like zooming into an image and using mathematical estimates to achieve highly accurate readings of physical instruments.

Q: How does the model ensure physical safety? A: Gemini Robotics-ER 1.6 adheres to strict safety policies regarding adversarial spatial reasoning and physical constraints, such as refusing to handle objects that exceed weight limits or present material hazards.