Mapping the Modern World: How Google Research's S2Vec Learns the Language of Our Cities

Google Research has introduced S2Vec, an approach for learning representations of modern urban environments. S2Vec treats geographic data and city structure as a kind of 'language' and learns spatial representations from it. The goal is to improve how machines interpret the physical world, with a particular focus on the intricate layouts of cities. Google Research categorizes the work under Algorithms and Theory. It sits at the intersection of geospatial data and machine learning and offers a framework for more sophisticated urban modeling and analysis. The work is framed in foundational, theoretical terms, but its implications for mapping technology, spatial intelligence, and the future of geographic information systems could be significant.

Key Takeaways

- Google Research introduces S2Vec, a method for learning urban spatial representations.

- The approach treats city layouts and geographical structures as a language to be decoded.

- The research is categorized under Algorithms and Theory and aims to improve how the modern world is mapped.

- S2Vec aims to enhance how AI systems interpret and navigate complex urban environments.

In-Depth Analysis

Decoding Urban Structures through S2Vec

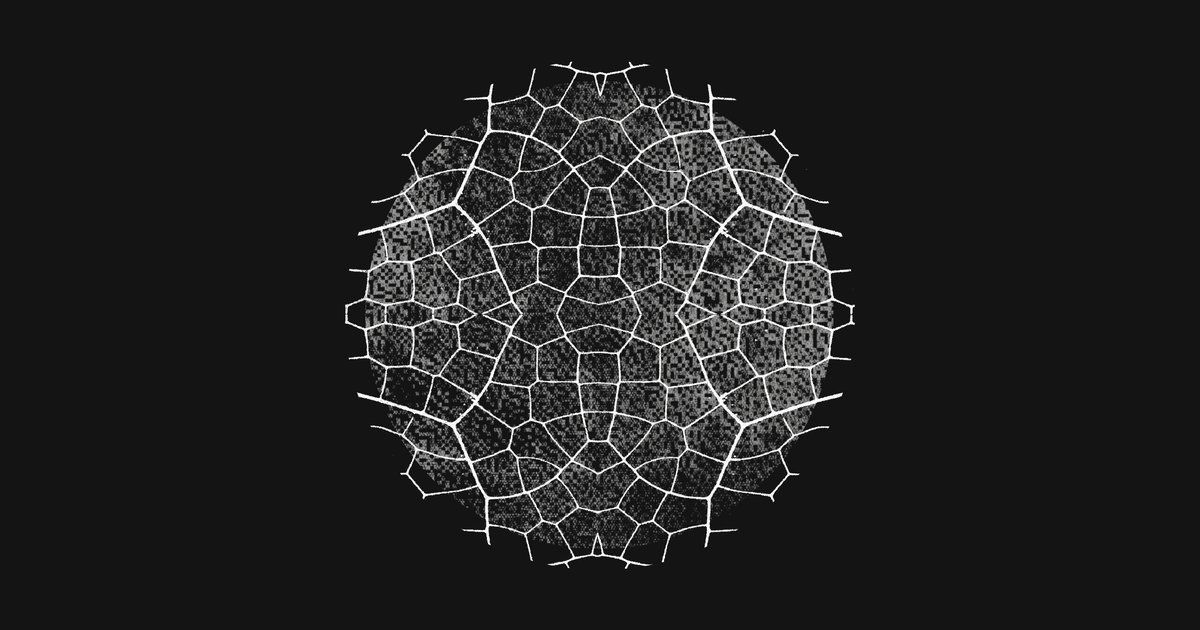

Google Research's S2Vec represents a shift in how urban environments are analyzed because it applies principles from language learning to physical geography. It treats the organization of a city as a structured language, which lets the model identify patterns and relationships in urban data that traditional mapping methods might overlook. A word's meaning depends on the words around it. In the same way, the character of a place depends on the streets, buildings, and landmarks around it. This framing lets S2Vec model how those elements interact to form a coherent whole.
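Here is a minimal sketch of the "city as language" analogy. Each grid cell plays the role of a document, and the features inside it (cafes, offices, parks) play the role of words. Cells can then be compared by their "vocabulary." Everything here is an assumption for illustration. The points of interest are made up, and a plain latitude/longitude grid stands in for whatever spatial indexing S2Vec actually uses. That grid uses the hypothetical `cell_id` helper with 0.01° cells.

```python
import math
from collections import Counter

# Hypothetical points of interest: (latitude, longitude, category).
# The coordinates and categories are made up for illustration only.
POIS = [
    # Neighborhood A: mostly cafes and offices
    (40.715, -74.005, "cafe"), (40.716, -74.004, "cafe"),
    (40.714, -74.006, "office"), (40.717, -74.003, "office"),
    (40.713, -74.007, "transit"),
    # Neighborhood B: a similar commercial mix
    (40.725, -74.005, "cafe"), (40.726, -74.004, "office"),
    (40.724, -74.006, "office"), (40.727, -74.003, "transit"),
    # Neighborhood C: green space
    (40.785, -73.965, "park"), (40.786, -73.964, "park"),
    (40.784, -73.966, "playground"), (40.783, -73.967, "cafe"),
]

CELL_SIZE_DEG = 0.01  # hypothetical grid resolution, in degrees


def cell_id(lat: float, lng: float, size: float = CELL_SIZE_DEG) -> tuple[int, int]:
    """Map a coordinate to the (row, col) index of a regular lat/lng grid cell."""
    return (math.floor(lat / size), math.floor(lng / size))


def cell_documents(pois) -> dict[tuple[int, int], Counter]:
    """Group feature categories by cell, like grouping words into documents."""
    docs: dict[tuple[int, int], Counter] = {}
    for lat, lng, category in pois:
        docs.setdefault(cell_id(lat, lng), Counter())[category] += 1
    return docs


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-features count vectors."""
    dot = sum(count * b[k] for k, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


docs = cell_documents(POIS)
a = docs[cell_id(40.715, -74.005)]
b = docs[cell_id(40.725, -74.005)]
c = docs[cell_id(40.785, -73.965)]
print(round(cosine(a, b), 2))  # 0.95: A and B share a commercial "vocabulary"
print(round(cosine(a, c), 2))  # 0.27: C reads like a different kind of place
```

Raw counts like these only capture what is inside a cell. Learned embeddings aim to go further and also capture what surrounds it.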

Algorithmic Foundations of Spatial Learning

At its core, S2Vec creates embeddings: vectors that represent geographic locations as points in a high-dimensional space. Learning these embeddings lets the model capture the 'context' of a location, much as natural language processing models learn the context of a word within a sentence. Locations with similar surroundings should therefore end up close to one another in that space. This gives future applications in spatial intelligence and automated urban planning a common, comparable representation to build on.
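The idea of learning a location's context can be illustrated with the classic count-based analogue of word2vec. The steps are:

- Treat each grid cell as a "word."
- Treat the features in the cell and its surrounding cells as its "context."
- Weight those co-occurrences with positive pointwise mutual information (PPMI).
- Compress the result with a truncated SVD.

This is a minimal sketch under stated assumptions, not S2Vec's training procedure. The grid, the feature names, and the counts are all hypothetical, and S2Vec's actual model and objective may differ substantially.

```python
import numpy as np

FEATURES = ["housing", "school", "office", "cafe"]

# Hypothetical 2 x 4 grid of cells; GRID[row, col] holds per-feature counts.
# Western half residential, eastern half commercial (made-up data).
GRID = np.zeros((2, 4, len(FEATURES)))
GRID[:, :2] = [3, 1, 0, 0]
GRID[:, 2:] = [0, 0, 3, 1]


def neighborhood_counts(grid: np.ndarray, radius: int = 1) -> np.ndarray:
    """Sum feature counts over each cell's surrounding window (clipped at edges).

    Returns a (num_cells, num_features) matrix whose row i is cell i's 'context',
    with cells numbered row-major.
    """
    rows, cols, num_features = grid.shape
    out = np.zeros((rows * cols, num_features))
    for r in range(rows):
        for c in range(cols):
            window = grid[max(r - radius, 0):r + radius + 1,
                          max(c - radius, 0):c + radius + 1]
            out[r * cols + c] = window.sum(axis=(0, 1))
    return out


def ppmi(counts: np.ndarray) -> np.ndarray:
    """Positive pointwise mutual information between cells and context features."""
    total = counts.sum()
    expected = counts.sum(axis=1, keepdims=True) * counts.sum(axis=0, keepdims=True) / total
    with np.errstate(divide="ignore", invalid="ignore"):
        pmi = np.log(counts / expected)
        # Unobserved pairs (count 0) and negative associations are clamped to 0.
        return np.where(counts > 0, np.maximum(pmi, 0.0), 0.0)


def embed(counts: np.ndarray, dim: int = 2) -> np.ndarray:
    """Truncated SVD of the PPMI matrix: row i becomes a dense dim-d embedding."""
    u, s, _ = np.linalg.svd(ppmi(counts), full_matrices=False)
    return u[:, :dim] * s[:dim]


def cosine(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine similarity between two embedding vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


emb = embed(neighborhood_counts(GRID))
print(emb.shape)  # (8, 2)
print(cosine(emb[0], emb[1]))  # close to 1: both cells' surroundings lean residential
print(cosine(emb[0], emb[3]))  # close to 0: residential vs. commercial surroundings
```

Cell 1 sits next to the commercial half, yet it still lands beside cell 0. This is because PPMI keeps only the associations that are stronger than chance. In this sense, context rather than raw contents shapes the embedding.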

Industry Impact

S2Vec could have significant implications for the geospatial and AI industries. A more sophisticated way to model urban environments could lead to better navigation systems, more efficient management of urban resources, and improved location-based services. More broadly, applying language-style learning to physical data is a cross-disciplinary idea. It could influence how machine learning models handle other kinds of non-textual data in the future.

Frequently Asked Questions

What is S2Vec?

S2Vec is a research initiative by Google that focuses on learning the 'language' of cities to create better spatial representations and maps of the modern world.

How does S2Vec interpret city data?

It treats the physical layout and structures of a city as a form of language. It learns how different geographic points relate to one another from the context that surrounds each location.
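A spatial context window is one way to make "relationships between geographical points" concrete. Places within some distance of each other are treated as co-occurring, just as nearby words share a window when training language models. The sketch below is a hypothetical illustration, not S2Vec's method. The place names, coordinates, and 200 m radius are made up, and distances use the haversine great-circle formula on a spherical Earth.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius, in meters

# Hypothetical places: (name, latitude, longitude). Made-up values.
PLACES = [
    ("cafe", 40.7150, -74.0050),
    ("bookstore", 40.7155, -74.0045),
    ("stadium", 40.7500, -73.9900),
]


def haversine_m(lat1: float, lng1: float, lat2: float, lng2: float) -> float:
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lng2 - lng1)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def context_pairs(places, radius_m: float):
    """Yield (target, context) pairs for places within radius_m of each other,
    the spatial counterpart of words sharing a sliding window in a sentence."""
    for i, (name_i, lat_i, lng_i) in enumerate(places):
        for j, (name_j, lat_j, lng_j) in enumerate(places):
            if i != j and haversine_m(lat_i, lng_i, lat_j, lng_j) <= radius_m:
                yield name_i, name_j


print(sorted(context_pairs(PLACES, radius_m=200)))
# [('bookstore', 'cafe'), ('cafe', 'bookstore')]
```

Pairs like these are the kind of training signal a skip-gram-style model could consume. Whether S2Vec uses anything similar is not stated here.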

What field of research does S2Vec fall under?

According to Google Research, S2Vec is primarily categorized under Algorithms and Theory, focusing on the mathematical and theoretical aspects of spatial learning.