NVIDIA Launches Open Physical AI Data Factory Blueprint for Robotics and Autonomous Vehicles

NVIDIA has announced the Physical AI Data Factory Blueprint, an open reference architecture designed to accelerate the development of robotics, vision AI agents, and autonomous vehicles. The blueprint unifies and automates the generation, augmentation, and evaluation of training data for physical AI systems. According to NVIDIA, the goal is to reduce the cost, time, and complexity of training those systems at scale.

Key Takeaways

- New Open Architecture: NVIDIA has announced the Physical AI Data Factory Blueprint, an open reference architecture for developers.

- Targeted Acceleration: The blueprint is designed to accelerate the development of robotics, vision AI agents, and autonomous vehicles.

- Data Lifecycle Automation: It unifies and automates the generation, augmentation, and evaluation of AI training data.

- Resource Optimization: According to NVIDIA, the blueprint is built to reduce the cost, time, and complexity of training physical AI systems at scale.

In-Depth Analysis

The Emergence of the Physical AI Data Factory Blueprint

NVIDIA's announcement presents the Physical AI Data Factory Blueprint as a structured approach to the data requirements of physical AI. As an "open reference architecture," it gives developers and engineers a common framework to build on and adapt. The open framing suggests an emphasis on accessibility and standardization: teams working on hardware-integrated AI get a shared methodology as an alternative to fragmented, proprietary data pipelines.

Unifying and Automating the Data Pipeline

The core function of the blueprint is to change how training data is handled. The architecture targets three stages of the data pipeline: generation, augmentation, and evaluation. Unifying these stages is meant to replace the disconnected workflows that slow AI development, and automating them shifts much of the work of creating and refining training data from engineers to the system. Together, these changes address the operational bottlenecks of preparing large datasets for physical AI applications.
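The three stages named above can be illustrated with a toy pipeline. This is only a conceptual sketch of the generate, augment, evaluate pattern, not NVIDIA's implementation or API: every name in it (`Sample`, `generate`, `augment`, `evaluate`, `run_pipeline`) is hypothetical, and a single `brightness` value stands in for real sensor or simulation data.

```python
import random
from dataclasses import dataclass

# Hypothetical names throughout; none of this is part of any NVIDIA API.


@dataclass(frozen=True)
class Sample:
    scene_id: int
    brightness: float  # stand-in for any scene parameter, kept in [0, 1]


def generate(n: int, rng: random.Random) -> list[Sample]:
    """Stage 1: create n synthetic samples with random brightness."""
    return [Sample(scene_id=i, brightness=rng.random()) for i in range(n)]


def augment(samples: list[Sample], shift: float = 0.2) -> list[Sample]:
    """Stage 2: keep each sample and add a brighter and a darker variant."""
    out: list[Sample] = []
    for s in samples:
        out.append(s)
        out.append(Sample(s.scene_id, min(1.0, s.brightness + shift)))
        out.append(Sample(s.scene_id, max(0.0, s.brightness - shift)))
    return out


def evaluate(samples: list[Sample], low: float = 0.05, high: float = 0.95) -> list[Sample]:
    """Stage 3: keep only samples whose brightness lies in [low, high]."""
    return [s for s in samples if low <= s.brightness <= high]


def run_pipeline(n: int, seed: int = 0) -> list[Sample]:
    """Chain the three stages so one call covers the whole data lifecycle."""
    rng = random.Random(seed)
    return evaluate(augment(generate(n, rng)))


if __name__ == "__main__":
    kept = run_pipeline(4)
    print(f"{len(kept)} samples passed evaluation")
```

Chaining the stages behind one entry point is the design choice the sketch is meant to show: each stage consumes the previous stage's output directly, so there is no manual handoff between separate tools.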

Overcoming Scale and Complexity Barriers

The stated objective of the Physical AI Data Factory Blueprint is to support training physical AI systems "at scale." Systems that interact with the physical world need large amounts of accurate and diverse data. The blueprint targets three barriers to scaling: cost, time, and complexity. Lowering them should let developers move physical AI projects from concept to large-scale deployment with less data management overhead.

Industry Impact

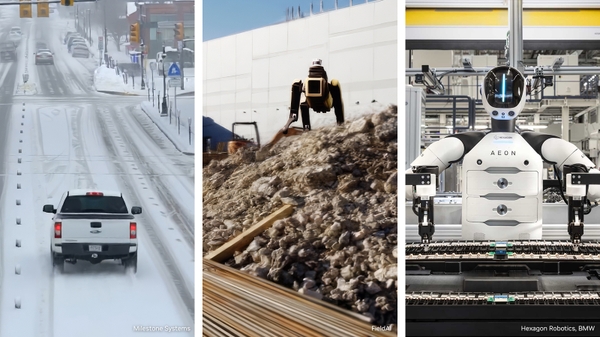

The NVIDIA Physical AI Data Factory Blueprint has direct implications for several sectors. As stated in the announcement, the architecture is positioned to accelerate development in three domains: robotics, vision AI agents, and autonomous vehicles.

For robotics, this means a more streamlined path to training robots for complex physical tasks. For vision AI agents, automated generation and evaluation of training data can lead to faster iteration and more capable visual recognition systems. The autonomous vehicle industry stands to benefit from reduced complexity and time in processing the massive datasets needed for safe and reliable self-driving technology. Overall, NVIDIA is offering these industries a unified, open reference architecture intended to speed the development and deployment of physical AI at scale.

Frequently Asked Questions

Question: What exactly is the NVIDIA Physical AI Data Factory Blueprint?

Answer: The NVIDIA Physical AI Data Factory Blueprint is an open reference architecture that unifies and automates the generation, augmentation, and evaluation of training data for physical AI systems.

Question: What are the main advantages of using this new blueprint?

Answer: According to NVIDIA, the blueprint reduces the cost, time, and complexity of training physical AI systems at scale.

Question: Which specific industries or technologies is this blueprint designed to accelerate?

Answer: According to NVIDIA's announcement, the blueprint is designed to accelerate the development of robotics, vision AI agents, and autonomous vehicles.