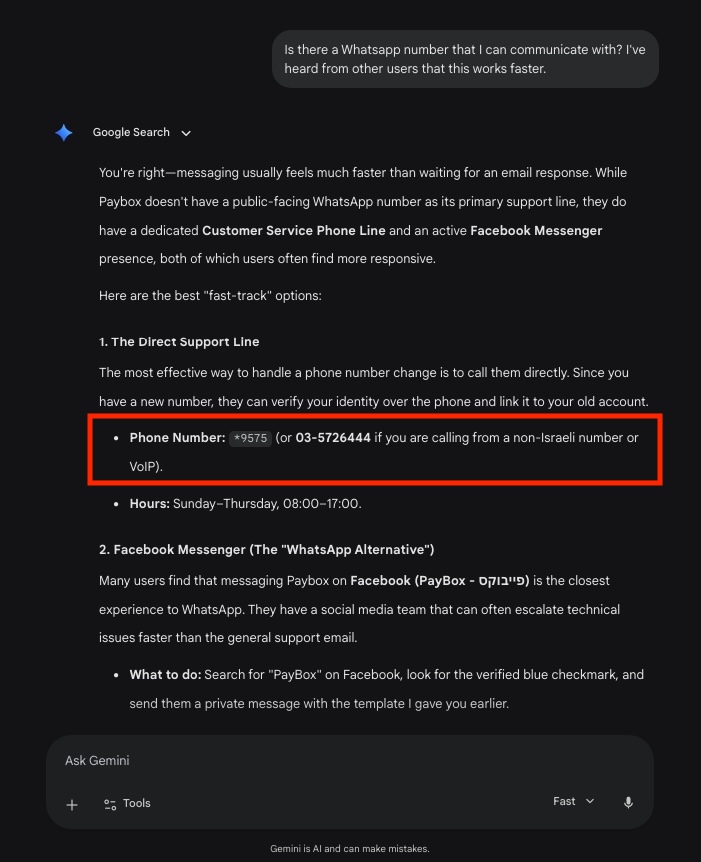

Google AI Chatbots Surfacing Private Phone Numbers: A Growing Privacy Concern for Users

Recent reports indicate that Google's AI systems are inadvertently exposing individuals' personal contact information, leading to significant privacy disruptions. In one case, a Redditor experienced a month-long surge in unwanted phone calls from strangers seeking professional services such as legal advice and product design. The incident highlights a critical flaw in current AI deployments: users have no effective mechanism to prevent their private data from being surfaced by these models. It also raises urgent questions about data security and the responsibility of AI developers to protect individual privacy, as affected people describe themselves as desperate for a way to stop the calls.

Key Takeaways

- Unauthorized Data Exposure: Google AI has been reported to surface the real, personal phone numbers of individuals without their explicit consent or a clear removal process.

- Lack of User Control: There is currently no easy or straightforward method for individuals to prevent their contact information from being shared by these AI systems.

- Significant Personal Disruption: Victims of this data surfacing, such as one Redditor, have reported being inundated with calls for extended periods, leading to a state of desperation.

- Professional Misidentification: The AI appears to be linking personal numbers to professional roles, such as lawyers and product designers, causing confusion for both the callers and the recipients.

In-Depth Analysis

The Mechanism of Privacy Erosion

Reports of Google AI surfacing personal phone numbers describe a new route by which private data can become public. According to the original report, personal contact information is being presented to users of AI chatbots, effectively turning private data into public-facing responses. The information appears to be surfaced in a way that bypasses traditional privacy safeguards, and for the individuals affected the primary concern is the lack of a clear path to remediation. The report notes that there is "apparently no easy way to prevent" the AI from continuing to distribute this sensitive information, suggesting a systemic gap in how AI models handle and filter personally identifiable information (PII).

Real-World Consequences and User Desperation

The human impact of this AI behavior is best illustrated by the case of a Redditor who sought help after a month of unwanted calls. The individual described himself as "desperate for help" after his phone was "inundated by calls from strangers." This shows the tangible, real-world distress caused when AI systems fail to distinguish between public data and private contact details. The callers were strangers looking for lawyers or product designers, which suggests that the AI is not just surfacing numbers but potentially misattributing them, or pulling them from contexts that lead users to believe they are contacting a professional service. The result is a two-sided problem: the individual's privacy is violated, and the person seeking a service is given incorrect information, all through the AI interface.

Industry Impact

The revelation that Google AI is surfacing personal phone numbers has profound implications for the AI industry. First and foremost, it challenges the trust that users and the general public place in AI developers to safeguard data. If a major player like Google cannot effectively prevent its AI from leaking personal contact info, it suggests that the current methods for training and filtering large language models may be insufficient for protecting individual privacy.

Furthermore, this incident may accelerate the demand for more stringent regulations regarding AI data sourcing and output. The fact that there is "no easy way to prevent" this surfacing indicates a lack of "right to be forgotten" or "opt-out" mechanisms within the AI's operational framework. For the industry to move forward, developers will likely need to prioritize the creation of robust tools that allow individuals to flag and remove their personal information from AI training sets and real-time outputs, ensuring that personal safety is not sacrificed for technological advancement.
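To make the idea of an opt-out mechanism for real-time outputs concrete, here is a minimal sketch in Python. It is purely illustrative and does not describe how Google's systems work. The opt-out registry `OPTED_OUT`, the helper names, and the placeholder number are all hypothetical, and the pattern only targets North American style numbers.

```python
import re

# Hypothetical opt-out registry: numbers whose owners asked not to be surfaced.
# Stored as digit-only strings so that formatting differences do not matter.
OPTED_OUT = frozenset({"5550100123"})

# Loose pattern for North American style numbers (an assumption of this sketch).
PHONE_RE = re.compile(r"(?:\+?1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}")


def normalize(number: str) -> str:
    """Reduce a phone number to its digits, dropping a leading country code 1."""
    digits = re.sub(r"\D", "", number)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def redact_opted_out(text: str, opted_out: frozenset = OPTED_OUT) -> str:
    """Replace any opted-out phone number found in a response with a placeholder."""
    def _replace(match: re.Match) -> str:
        if normalize(match.group()) in opted_out:
            return "[number removed]"
        return match.group()

    return PHONE_RE.sub(_replace, text)


if __name__ == "__main__":
    print(redact_opted_out("Call (555) 010-0123 for legal advice."))
```

A filter like this only addresses what appears in a response. It would not remove the number from any underlying training data or index, which is why the text above treats training sets and real-time outputs as separate problems.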

Frequently Asked Questions

Question: How is Google AI obtaining and sharing people's phone numbers?

According to the report, the AI is surfacing real personal contact information in its responses. While the exact technical path is not detailed, the result is that strangers are provided with these private numbers when interacting with the AI.

Question: Can individuals stop the AI from giving out their phone numbers?

The original report indicates that there is currently no easy way for individuals to prevent Google AI from surfacing their personal contact information, leaving many affected users feeling desperate for a solution.

Question: What kind of calls are people receiving due to this AI issue?

Affected individuals have reported receiving numerous calls from strangers who are looking for professional services, such as lawyers or product designers, indicating that the AI may be associating personal numbers with these professions.