Netflix Unveils VOID: A Physics-Based Approach to Video Editing and Object Removal

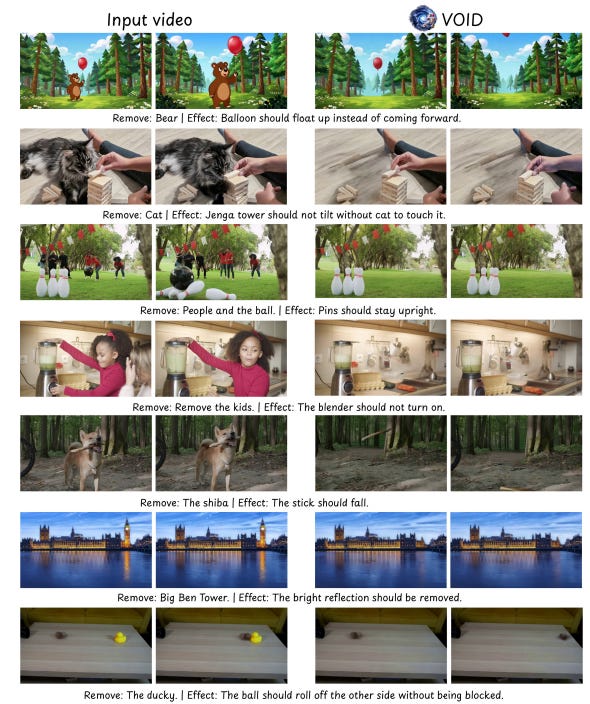

Netflix has introduced VOID, a groundbreaking video editing technology that shifts the paradigm of object removal from traditional pixel-patching to causal simulation. By treating the editing process as a simulation of physical laws, VOID effectively eliminates the common issue of "ghost" physics—visual artifacts or inconsistencies that often remain after an object is digitally removed from a scene. This development signifies a major leap in video post-production, ensuring that edited footage maintains the structural and physical integrity of the original environment. The technology focuses on understanding the underlying physics of a scene to create more realistic and seamless transitions, marking a significant departure from previous generative AI methods that relied solely on visual pattern matching.

Key Takeaways

- Physics-First Editing: VOID treats object removal as a causal simulation rather than simple pixel manipulation.

- Elimination of Artifacts: The system successfully removes "ghost" physics, ensuring edited scenes remain visually and physically consistent.

- Advanced Causal Simulation: By understanding the cause-and-effect of physical elements, the tool provides a more realistic output than traditional patching methods.

- Netflix Innovation: This technology represents Netflix's latest push into high-fidelity AI-driven video post-production tools.

In-Depth Analysis

Moving Beyond Pixel-Patching

Traditional video editing and object removal techniques have long relied on "pixel-patching," a process where the software attempts to fill in the gap left by a removed object by sampling surrounding pixels or using generative textures. While often effective for static backgrounds, this method frequently fails in dynamic scenes where light, shadow, and motion are interconnected. Netflix's VOID (Video Object Inpainting & Deletion) changes this approach by utilizing causal simulation. Instead of just looking at what the pixels should look like, the system simulates the physical environment to determine how the scene would naturally appear if the object had never existed.

Solving the Problem of "Ghost" Physics

One of the most persistent challenges in digital video editing is the presence of "ghost" physics—remnants of a removed object's influence on its surroundings, such as lingering shadows, reflections, or interrupted motion paths. Because VOID operates on the principles of physics and causality, it identifies these dependencies. By treating the removal as a physical event within a simulated space, the technology ensures that the environmental factors tied to the removed object are also adjusted, resulting in a clean, artifact-free sequence that adheres to the laws of physics.

Industry Impact

The introduction of VOID has significant implications for the film and television industry, particularly in post-production efficiency. By automating the correction of physical inconsistencies that previously required frame-by-frame manual adjustment, Netflix is setting a new standard for AI-assisted editing. This move suggests a shift in the AI industry toward "physics-aware" models, where generative tools are no longer just mimicking visual styles but are beginning to understand the fundamental rules of the physical world. This could lead to more immersive visual effects and lower costs for high-quality content creation.

Frequently Asked Questions

Question: What makes VOID different from traditional AI video editing tools?

Unlike traditional tools that use pixel-patching to fill in gaps, VOID uses causal simulation to treat object removal as a physical event, ensuring the laws of physics are maintained in the edited scene.

Question: What are "ghost" physics in video editing?

"Ghost" physics refer to visual inconsistencies or artifacts, such as shadows or reflections, that remain in a video after an object has been digitally removed. VOID is designed specifically to eliminate these issues.

Question: Who developed the VOID technology?

VOID was developed by Netflix as a solution to improve the realism and physical accuracy of video object removal and editing.