Google Research Explores the Optimization of AI Benchmarks: Determining the Ideal Number of Raters

A recent publication from Google Research, titled 'Building better AI benchmarks: How many raters are enough?', examines how human evaluations of artificial intelligence should be designed. Published under the Algorithms & Theory category, the research addresses a fundamental challenge in the AI industry: the reliability of benchmarks that depend on human judgments. By examining how many raters are statistically necessary, the study aims to provide a framework for evaluation metrics that are both accurate and efficient. This matters to developers and researchers who rely on human feedback to evaluate and fine-tune large language models and other algorithmic systems, because a useful benchmark must be robust without wasting rating resources.

Key Takeaways

- Google Research investigates the optimal number of human raters required to establish reliable AI benchmarks.

- The study is categorized under Algorithms & Theory, focusing on the mathematical foundations of evaluation.

- Choosing an appropriate number of raters is identified as a core component of building better, more consistent AI performance metrics.

- The research aims to balance benchmark accuracy against the resources required for human evaluation.

In-Depth Analysis

The Challenge of AI Benchmarking

In the current landscape of artificial intelligence, benchmarks serve as the primary yardstick for progress. However, as Google Research points out, the human raters behind many of these benchmarks introduce variability, since different people can judge the same model output differently. The central question, 'How many raters are enough?', concerns how many human labels are needed before a benchmark's results are statistically meaningful. Without a standardized approach to choosing the number of raters, benchmarks risk being either under-powered, which leads to unreliable results, or over-resourced, which spends more rating effort than the conclusions require.
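To make "statistically meaningful" concrete, here is a minimal sketch of one textbook calculation. It is not the method from the Google Research publication. If raters score a model output independently and their scores have a known standard deviation, the margin of error of the mean score shrinks as z * sd / sqrt(k). The smallest adequate number of raters k can therefore be solved for directly. The helper names `raters_needed` and `margin_of_error`, the normal approximation, and the assumption of independent raters with a known standard deviation are all illustrative assumptions.

```python
import math
from statistics import NormalDist


def _z_value(confidence: float) -> float:
    """Two-sided critical value of the standard normal (about 1.96 at 95%)."""
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return NormalDist().inv_cdf(0.5 + confidence / 2)


def margin_of_error(rating_sd: float, k: int, confidence: float = 0.95) -> float:
    """Hypothetical helper: half-width of the normal-approximation confidence
    interval for the mean of k independent ratings with std. dev. rating_sd."""
    return _z_value(confidence) * rating_sd / math.sqrt(k)


def raters_needed(rating_sd: float, margin: float, confidence: float = 0.95) -> int:
    """Hypothetical helper: smallest k with z * rating_sd / sqrt(k) <= margin."""
    if rating_sd <= 0 or margin <= 0:
        raise ValueError("rating_sd and margin must be positive")
    # Solve z * sd / sqrt(k) <= margin for k, then round up to a whole rater.
    return math.ceil((_z_value(confidence) * rating_sd / margin) ** 2)


if __name__ == "__main__":
    for m in (0.5, 0.25):
        print(f"margin {m}: {raters_needed(1.0, m)} raters")
```

For example, assume a rating standard deviation of 1.0 on a 1-5 scale. Then `raters_needed(1.0, 0.5)` returns 16, and halving the margin to 0.25 raises it to 62. Precision improves only with the square root of the rater count, which is why over-resourcing quickly becomes expensive.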

Algorithmic Foundations for Human Rating

By situating this research within Algorithms & Theory, Google emphasizes that human rating is not just a logistical task but a theoretical one. The research suggests that determining the ideal number of raters requires statistical analysis to ensure that the consensus reached by a group of humans truly reflects the quality of an AI system's output. This methodology is essential for refining how models are tested against human intuition and factual correctness, giving a more principled, quantitative basis to what has traditionally been a subjective process.
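A simple majority-vote model shows how group consensus becomes more trustworthy as raters are added. This is a minimal sketch under strong simplifying assumptions, not the analysis used by Google Research. It assumes binary judgments and independent raters who are each correct with the same probability p > 0.5, which is the setting of the Condorcet jury theorem. `majority_vote_accuracy` and `min_raters_for_accuracy` are hypothetical helpers.

```python
from math import comb
from typing import Optional


def majority_vote_accuracy(p: float, k: int) -> float:
    """Probability that a strict majority of k independent raters, each correct
    with probability p, yields the correct binary label (hypothetical helper).
    k must be odd so that ties cannot occur."""
    if k < 1 or k % 2 == 0:
        raise ValueError("k must be a positive odd integer")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    need = k // 2 + 1  # smallest number of correct votes that forms a majority
    # Sum the binomial probabilities of getting `need` to `k` correct votes.
    return sum(comb(k, i) * p**i * (1 - p) ** (k - i) for i in range(need, k + 1))


def min_raters_for_accuracy(p: float, target: float, max_k: int = 101) -> Optional[int]:
    """Smallest odd k whose majority vote reaches `target` accuracy, or None
    if no odd k up to max_k does (hypothetical helper)."""
    for k in range(1, max_k + 1, 2):
        if majority_vote_accuracy(p, k) >= target:
            return k
    return None


if __name__ == "__main__":
    for k in (1, 3, 5, 7, 9):
        print(k, round(majority_vote_accuracy(0.7, k), 4))
```

With p = 0.7, a single rater is right 70% of the time and three raters reach a correct majority 78.4% of the time. `min_raters_for_accuracy(0.7, 0.9)` returns 9. In practice, raters' errors are often correlated and some outputs are genuinely ambiguous, so this independent model tends to overstate the benefit of adding raters.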

Industry Impact

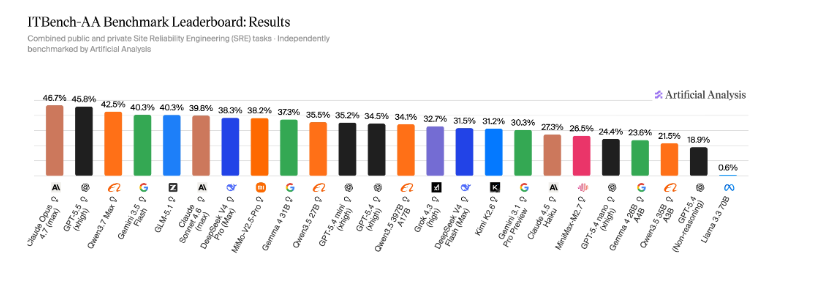

The implications of this research for the AI industry are significant. As companies race to release more advanced models, the pressure to prove superiority through benchmarks has never been higher. By establishing clearer guidance on how many raters to use, Google Research offers a path toward more standardized and trustworthy industry benchmarks. When a model claims an improvement, the data behind that claim should then be statistically sound. The guidance also lets smaller research groups stretch their evaluation budgets by using only as many raters as valid results require.

Frequently Asked Questions

Question: Why is the number of raters important for AI benchmarks?

The number of raters determines the reliability and statistical power of a benchmark. Too few raters can produce noisy or idiosyncratic results that reflect individual raters more than the model. Too many add cost and delay model development without a matching gain in reliability.

Question: What field of study does this Google Research work fall under?

This research is categorized under Algorithms & Theory, indicating it focuses on the mathematical and theoretical frameworks used to improve AI evaluation processes.