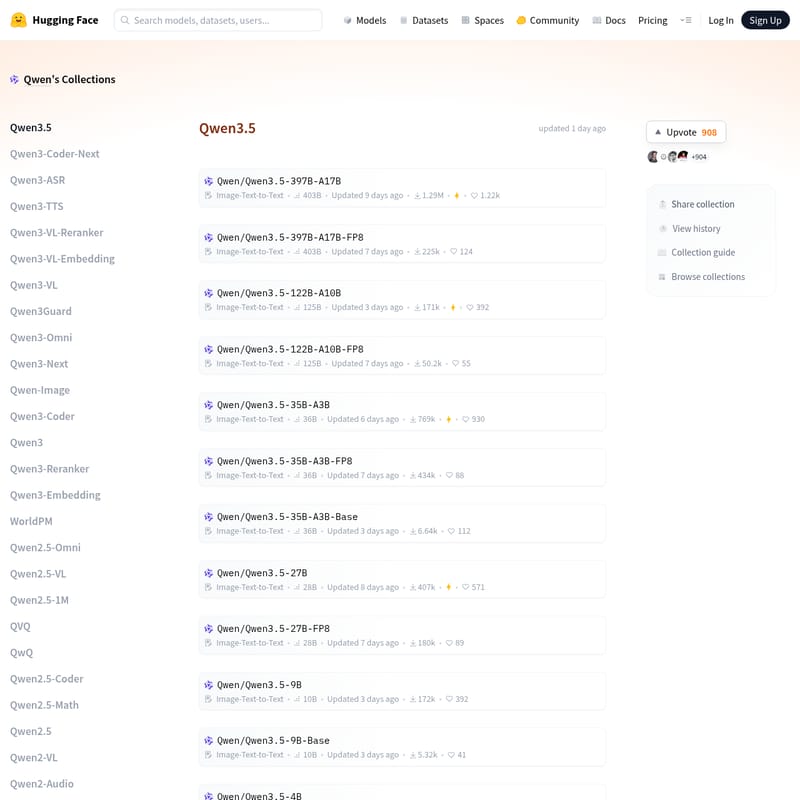

Qwen3.5 Small

Qwen3.5: Advanced Multi-Modal AI Models for Image-Text-to-Text Processing and Large Language Tasks

The Qwen3.5 collection is the latest generation of Qwen multi-modal models, offering a range of models optimized for Image-Text-to-Text tasks. From the flagship Qwen3.5-397B-A17B, listed at 403 billion parameters, to efficient mobile-ready versions like the Qwen3.5-0.8B, the series covers a wide span of computational needs. Hosted on Hugging Face, Qwen3.5 includes FP8 and GPTQ-Int4 quantizations, Base models, and specialized variants such as Qwen3.5-Coder and Qwen3.5-VL for vision-language capabilities. Whether for complex reasoning or localized edge computing, Qwen3.5 targets strong performance at each size.

2026-03-05

Qwen3.5 Small Product Information

Discover Qwen3.5: The Comprehensive Multi-Modal AI Collection

The Qwen3.5 ecosystem offers a wide array of models designed for high-performance Image-Text-to-Text processing. Developed as part of the broader Qwen family, this latest iteration introduces advancements in parameter efficiency, multi-modal understanding, and specialized task execution.

With models ranging from ultra-lightweight versions to massive flagship architectures, Qwen3.5 empowers developers, researchers, and enterprises to implement state-of-the-art AI across various hardware environments.

What's Qwen3.5?

Qwen3.5 is a versatile collection of large language and multi-modal models hosted on Hugging Face. It serves as a successor to the highly successful Qwen2 and Qwen2.5 series. The primary focus of the Qwen3.5 series is the Image-Text-to-Text modality, allowing the models to process complex visual inputs alongside textual data to generate coherent, context-aware responses.

This collection includes several tiers based on parameter counts and optimization techniques:

- Flagship Models: Such as the Qwen3.5-397B-A17B, boasting over 400 billion parameters for extreme reasoning tasks.

- Mid-Range Models: Including the Qwen3.5-122B-A10B and Qwen3.5-35B-A3B, balancing power and efficiency.

- Compact Models: Such as Qwen3.5-9B, Qwen3.5-4B, and Qwen3.5-2B, ideal for more constrained environments.

- Edge-Ready Models: The Qwen3.5-0.8B, designed for speed and low latency.
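
The model names in the tiers above appear to follow a pattern: a size such as `397B`, optionally followed by an `A` suffix such as `A17B`. Reading the `A` suffix as the number of parameters activated per token in a mixture-of-experts model is an assumption based on how earlier Qwen releases were named; this page does not define it. The helper below (`parse_size` and `ModelSize` are hypothetical names) is a minimal sketch for pulling those numbers out of a name.

```python
import re
from typing import NamedTuple, Optional


class ModelSize(NamedTuple):
    total_b: float             # size stated in the name, in billions
    active_b: Optional[float]  # "A" suffix in billions, or None if absent


# Matches e.g. "Qwen3.5-397B-A17B", "Qwen3.5-0.8B", "Qwen3.5-27B-GPTQ-Int4".
_SIZE = re.compile(r"-(\d+(?:\.\d+)?)B(?:-A(\d+(?:\.\d+)?)B)?")


def parse_size(name: str) -> ModelSize:
    """Extract the stated size and the optional "A" suffix from a model name."""
    match = _SIZE.search(name)
    if match is None:
        raise ValueError(f"no size found in {name!r}")
    total, active = match.groups()
    return ModelSize(float(total), float(active) if active else None)


for name in ["Qwen3.5-397B-A17B", "Qwen3.5-35B-A3B", "Qwen3.5-0.8B"]:
    print(name, parse_size(name))
```

Note that the size in the name need not equal the parameter count reported on a model card; this page itself lists Qwen3.5-397B-A17B at 403 billion parameters.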

Features of Qwen3.5

Multi-Modal Versatility

The core strength of Qwen3.5 lies in its Image-Text-to-Text capabilities. These models are not just limited to text; they can interpret images, making them suitable for visual QA, document analysis, and scene description.
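
As a rough illustration of visual QA, the sketch below uses the `image-text-to-text` pipeline from the Hugging Face `transformers` library. The repository id `Qwen/Qwen3.5-0.8B` is an assumption, as is the expectation that an installed `transformers` version supports these models; check the model card before relying on either. The function names are hypothetical.

```python
from typing import Any, Dict, List


def build_messages(image_url: str, question: str) -> List[Dict[str, Any]]:
    """Build a single-turn chat message pairing one image with one question."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "url": image_url},
                {"type": "text", "text": question},
            ],
        }
    ]


def ask_about_image(image_url: str, question: str,
                    model_id: str = "Qwen/Qwen3.5-0.8B") -> str:
    """Run visual QA. The default model_id is an assumed repository name."""
    # Imported lazily so build_messages works without transformers installed.
    from transformers import pipeline

    pipe = pipeline("image-text-to-text", model=model_id)
    outputs = pipe(text=build_messages(image_url, question),
                   max_new_tokens=128, return_full_text=False)
    return outputs[0]["generated_text"]
```

Calling `ask_about_image("https://example.com/receipt.png", "What is the total amount?")` would download the model on first use, so the smallest model in the collection is the more practical choice for a quick trial.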

Diverse Quantization Options

To ensure accessibility across different hardware configurations, Qwen3.5 provides multiple quantization formats:

- FP8 Versions: Optimized for high-speed inference with minimal precision loss.

- GPTQ-Int4 Versions: Significantly reduced memory footprint, allowing large models like Qwen3.5-27B-GPTQ-Int4 to run on consumer-grade GPUs.
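
To see why the lower-precision formats matter, here is a back-of-the-envelope estimate of weight storage at different precisions. It is a sketch under simplifying assumptions: a 16-bit baseline (not stated on this page), no allowance for quantization scales, activations, or the KV cache, and the size in the model name taken as the parameter count. `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (10**9 bytes) at a given precision."""
    bytes_per_weight = bits_per_weight / 8
    # billions of parameters * bytes per parameter = billions of bytes = GB
    return params_billions * bytes_per_weight


for label, bits in [("16-bit", 16), ("FP8", 8), ("GPTQ-Int4", 4)]:
    print(f"27B @ {label}: ~{weight_memory_gb(27, bits):.1f} GB")
# 27B @ 16-bit: ~54.0 GB
# 27B @ FP8: ~27.0 GB
# 27B @ GPTQ-Int4: ~13.5 GB
```

Under these assumptions, the Int4 weights of a 27B model come to roughly a quarter of the 16-bit size, which is what brings them within reach of a single consumer-grade GPU. Real usage will be higher once runtime overhead is included.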

Specialized Task Handling

The Qwen ecosystem extends beyond general chat. The collection includes specialized branches such as:

- Qwen3.5-Coder: Tailored for software development and programming tasks.

- Qwen3.5-VL (Vision-Language): Enhanced for visual understanding and grounding.

- Qwen3-Math and Qwen3-Audio: Specialized for mathematical reasoning and auditory data processing.

Scalability and Accessibility

From the 403B-parameter flagship down to models of around 0.9B parameters, Qwen3.5 spans a wide range of use cases. The inclusion of "Base" models allows for further fine-tuning by the community, while the instruct-tuned versions are ready for immediate deployment.

Use Case Scenarios for Qwen3.5

Enterprise-Level Reasoning

Using the Qwen3.5-397B-A17B, organizations can process massive datasets, perform complex strategic analysis, and handle multi-step logical reasoning that requires a deep understanding of both visual and textual context.

Mobile and Edge Computing

With the Qwen3.5-0.8B and Qwen3.5-2B models, developers can integrate powerful AI directly into mobile applications or IoT devices, providing offline intelligence without relying on cloud infrastructure.

Visual Content Creation and Analysis

Thanks to the Image-Text-to-Text architecture, Qwen3.5 can be used to generate detailed descriptions of images, help visually impaired users understand their surroundings, or extract structured data from scanned documents and infographics.
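
For the structured-data use case, one common pattern is to prompt the model to answer with a JSON object and then parse that object out of the reply, since models often wrap it in extra text. The sketch below shows only the parsing step; the reply string is made up for illustration, and `parse_json_reply` is a hypothetical helper, not part of any Qwen tooling.

```python
import json
from typing import Any, Dict


def parse_json_reply(reply: str) -> Dict[str, Any]:
    """Return the first JSON object found in a model reply.

    Text before and after the object is ignored. Raises ValueError if the
    reply contains no opening brace or the object is malformed.
    """
    start = reply.find("{")
    if start == -1:
        raise ValueError("no JSON object in reply")
    # raw_decode parses one JSON value and tolerates trailing text.
    obj, _end = json.JSONDecoder().raw_decode(reply[start:])
    return obj


# Made-up reply, standing in for what a model might return for a scanned invoice.
reply = 'Here is the extracted data: {"invoice_no": "A-17", "total": 42.5} Done.'
print(parse_json_reply(reply))
# {'invoice_no': 'A-17', 'total': 42.5}
```

In practice the parsed fields should still be validated against the source document, since a well-formed JSON object is no guarantee that the extracted values are correct.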

Programming and Technical Support

The Qwen3.5-Coder variant is specifically designed to assist in writing, debugging, and explaining code across various programming languages, significantly boosting developer productivity.

FAQ

What is the difference between Qwen3.5 and Qwen2.5?

Qwen3.5 represents the latest generation with improved multi-modal capabilities (Image-Text-to-Text) and refined parameter architectures compared to the Qwen2.5 and Qwen2 series.

What do FP8 and GPTQ-Int4 mean for these models?

These are quantization formats, which store model weights at lower numerical precision. FP8 provides a balance of speed and precision, while GPTQ-Int4 compresses the model significantly, allowing larger models like Qwen3.5-122B-A10B to be used on hardware with less VRAM.

Are there base models available for fine-tuning?

Yes, the collection includes Base versions for most parameter sizes (e.g., Qwen3.5-27B-Base), which are ideal for developers looking to train the model on specific domain data.

Can Qwen3.5 handle audio or math specifically?

While the main Qwen3.5 models are multi-modal for image and text, the collection includes specialized versions like Qwen3-Math, Qwen3-Audio, and Qwen3-ASR to handle those specific niches.

Where can I find the documentation for Qwen3.5?

All Qwen3.5 models, including their model cards, datasets, and community discussions, are hosted on Hugging Face under the official Qwen repository.