OpenAI’s GPT-5.x Achieves Breakthrough Results in Theoretical Physics and Quantum Gravity Research

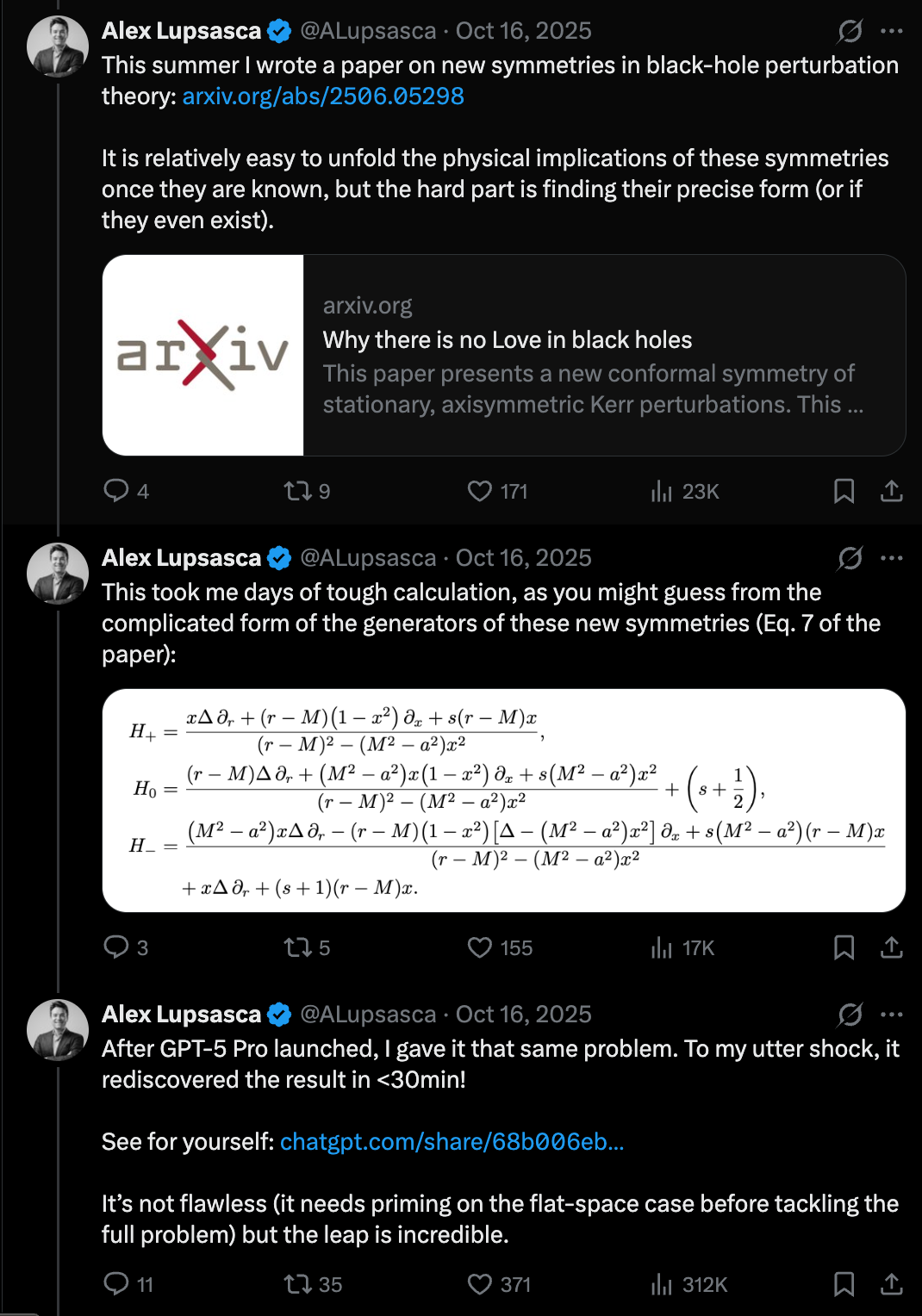

In a significant revelation shared via Latent Space, Alex Lupsasca of OpenAI has detailed how the upcoming GPT-5.x model has successfully derived new results within the fields of theoretical physics and quantum gravity. This milestone marks a transition from AI acting as a general-purpose assistant to becoming a primary driver of scientific discovery in highly complex, mathematical domains. The discussion, titled 'Doing Vibe Physics,' explores the narrative behind these derivations, suggesting that the 'vibe' or intuition-led approach of large language models is now yielding rigorous, verifiable scientific output. This development represents a major leap in the capabilities of the GPT-5.x architecture, specifically its ability to navigate the intricate logical and mathematical frameworks required for quantum gravity research.

Key Takeaways

- Scientific Milestone: GPT-5.x has moved beyond information retrieval to derive entirely new results in theoretical physics.

- Quantum Gravity Focus: The model's breakthroughs are specifically situated in the domain of quantum gravity, one of the most challenging areas of modern science.

- OpenAI's Research Progress: Alex Lupsasca from OpenAI provides the primary account of this development, highlighting the model's advanced reasoning capabilities.

- From Intuition to Rigor: The process, described as 'Doing Vibe Physics,' suggests a unique methodology where AI intuition leads to formal scientific discovery.

In-Depth Analysis

The Evolution of GPT-5.x in Theoretical Research

The announcement that GPT-5.x has derived new results in theoretical physics signifies a fundamental shift in the utility of Large Language Models (LLMs). Previously, AI models were primarily utilized for summarizing existing literature, coding assistance, or solving established mathematical problems. However, the 'full story' presented by Alex Lupsasca indicates that GPT-5.x is now capable of original derivation. This implies that the model's underlying architecture has reached a level of logical consistency and symbolic reasoning sufficient to contribute to the frontiers of human knowledge. The use of the '5.x' designation suggests an iterative but powerful advancement over previous iterations, optimized for the high-dimensional reasoning required in the hard sciences.

Quantum Gravity: The Ultimate Benchmark for AI

Quantum gravity represents the effort to unify general relativity with quantum mechanics—a task that has eluded the world's most brilliant physicists for decades. By deriving new results in this specific field, GPT-5.x demonstrates a capacity to handle extreme abstraction and complex mathematical structures. The significance of this cannot be overstated; quantum gravity requires not just computational power, but a deep 'understanding' of theoretical frameworks that do not always have empirical data to guide them. The fact that an AI can navigate these theoretical waters suggests that OpenAI has successfully bridged the gap between linguistic pattern matching and formal scientific logic.

Understanding 'Vibe Physics' and Formal Derivation

The title of the discussion, 'Doing Vibe Physics,' points toward a novel intersection of AI intuition and scientific rigor. In the context of LLMs, 'vibes' often refer to the probabilistic 'feel' or direction the model takes based on its training data. When applied to physics, this suggests that GPT-5.x may be using a form of high-level heuristic reasoning to identify potential theoretical breakthroughs before formalizing them into verifiable results. This methodology could redefine the scientific method, where AI-driven 'intuition' serves as a precursor to rigorous mathematical proof, effectively accelerating the pace of discovery in theoretical disciplines.

Industry Impact

The implications for the AI industry and the broader scientific community are profound. First, this establishes a new benchmark for 'General Intelligence' in AI; if a model can contribute to quantum gravity, its reasoning capabilities are likely applicable to any complex field, from drug discovery to materials science. Second, it positions OpenAI not just as a software company, but as a core contributor to fundamental science. This may lead to a new era of 'AI-Scientist' collaborations, where human researchers use models like GPT-5.x to explore theoretical landscapes that were previously too dense or complex for human cognition alone. The transition from 'generative AI' to 'discovery AI' is now officially underway.

Frequently Asked Questions

Question: What is GPT-5.x?

GPT-5.x refers to an advanced iteration of OpenAI’s Generative Pre-trained Transformer models. Based on the report, this version features enhanced reasoning capabilities that allow it to derive original scientific results rather than just processing existing information.

Question: What does 'deriving new results' mean in this context?

In theoretical physics, deriving new results means using mathematical logic and existing theories to produce new formulas, theorems, or insights that were not previously known or documented. This indicates the AI is performing original research.

Question: Why is the focus on Quantum Gravity significant?

Quantum gravity is considered one of the 'holy grails' of physics. It is notoriously difficult because it requires reconciling two seemingly incompatible theories (General Relativity and Quantum Mechanics). Success in this field proves the AI's high-level abstract reasoning skills.