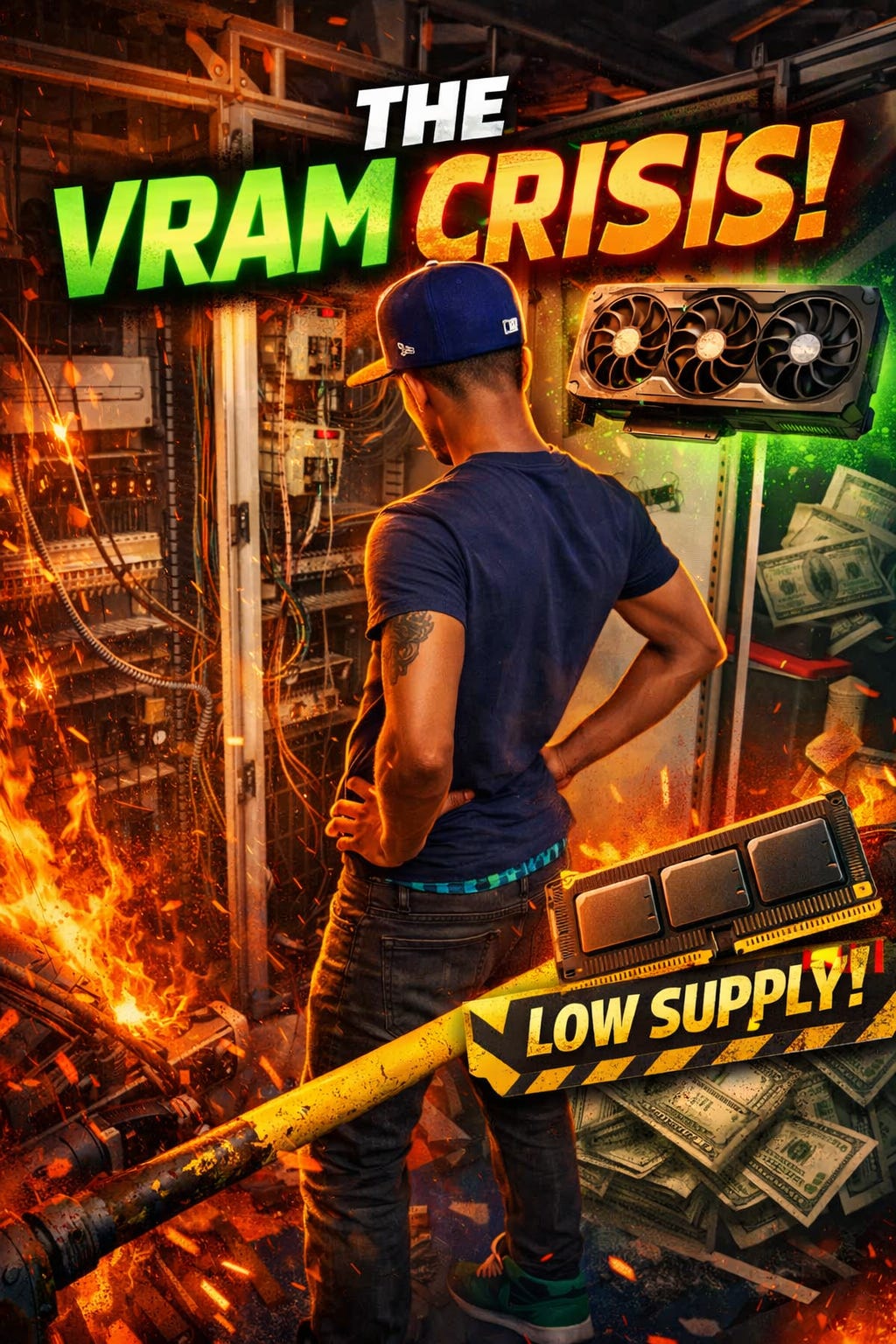

Google Gemma 4 31B Analysis: High-Capacity 256K Context Window Meets Significant VRAM Demands

Google has introduced Gemma 4 31B, which it positions as its most advanced open model to date. The model offers a 256K context window, enough to process extensive datasets and long-form content in a single pass, but this capability comes with a significant trade-off: early reports indicate that using the full window drives VRAM (Video Random Access Memory) requirements substantially higher. The release highlights an ongoing tension in AI hardware efficiency, where longer context translates directly into higher memory costs. Users who want to exploit the model's full potential must budget for the hardware overhead that comes with its expansive context window.

Key Takeaways

- Expansive Context Window: Gemma 4 31B features a 256K context window, marking a significant milestone for Google's open model series.

- High Hardware Requirements: Using the full context window leads to a substantial VRAM bill for users.

- Performance vs. Cost: While the model is Google's best open offering yet, its long-context utility depends on access to high-end hardware.

In-Depth Analysis

The 256K Context Breakthrough

Google's Gemma 4 31B represents a significant leap in the capabilities of open AI models. With a 256K context window, developers and researchers can feed massive amounts of data, such as several books or an extensive codebase, into a single prompt. This puts the model in competition with large proprietary models, offering a level of "memory" previously associated mainly with closed enterprise offerings. The 31B parameter count suggests a balance between raw capability and deployability, though the context window pushes beyond what typical consumer-grade hardware can handle.
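To put "deployability" in rough numbers, the sketch below estimates how much memory the weights alone occupy at common precisions. It assumes a nominal 31 billion dense parameters and simply multiplies by bytes per parameter; it deliberately ignores the KV cache, activations, and framework overhead, so real-world usage will be higher. The precision labels and the `weight_memory_gb` helper are illustrative, not part of any official Gemma tooling.

```python
# Rough weight-memory estimate for a dense model: parameters x bytes per parameter.
# ASSUMPTIONS (illustrative): a nominal 31e9 dense parameters; KV cache, activations
# and framework buffers are NOT included.

BYTES_PER_PARAM = {
    "bf16": 2.0,  # 16-bit floating point weights
    "int8": 1.0,  # 8-bit quantized weights
    "int4": 0.5,  # 4-bit quantized weights
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return approximate weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        print(f"{precision}: ~{weight_memory_gb(31e9, precision):.1f} GB for weights alone")
```

Under these assumptions the weights need roughly 62 GB at 16-bit precision and about a quarter of that at 4-bit, before any context is loaded.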

The VRAM Challenge and Resource Consumption

Despite the technical achievement of the 256K window, the practical use of Gemma 4 31B is constrained by its high VRAM requirements. For Large Language Models (LLMs), context length is not "free": during inference the model stores attention keys and values for every token in context (the KV, or key-value, cache), so this cache grows linearly with context length, on top of the memory already occupied by the model weights. For Gemma 4 31B, using the full 256K context results in a "VRAM bill" that may be prohibitive for many users. As a result, only those with access to high-end data center GPUs may be able to fully exploit the model's headline feature, highlighting a growing divide between model capability and hardware accessibility.
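The linear growth of the KV cache can be made concrete with the standard back-of-the-envelope formula: 2 (keys and values) x layers x KV heads x head dimension x bytes per element x tokens. The configuration in this sketch is hypothetical, chosen only to keep the arithmetic easy to follow; it is not Gemma 4 31B's published architecture, and it assumes every layer uses full attention. Models that use sliding-window attention on some layers would cache less.

```python
# Minimal KV-cache size estimate for standard (full) attention.
# Each cached token stores one key and one value vector per KV head in every layer,
# so memory grows linearly with the number of tokens held in context.
# NOTE: EXAMPLE below is HYPOTHETICAL and is not Gemma 4 31B's real configuration.

from dataclasses import dataclass


@dataclass(frozen=True)
class AttentionConfig:
    num_layers: int      # transformer layers that keep a KV cache
    num_kv_heads: int    # key/value heads (fewer than query heads under grouped-query attention)
    head_dim: int        # dimension of each attention head
    bytes_per_elem: int  # 2 for bf16/fp16, 1 for an 8-bit cache


def kv_cache_bytes(cfg: AttentionConfig, num_tokens: int, batch_size: int = 1) -> int:
    # The leading factor 2 accounts for storing both keys and values.
    per_token = 2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim * cfg.bytes_per_elem
    return per_token * num_tokens * batch_size


# Hypothetical configuration, for illustration only.
EXAMPLE = AttentionConfig(num_layers=64, num_kv_heads=8, head_dim=128, bytes_per_elem=2)

if __name__ == "__main__":
    for tokens in (8_192, 32_768, 131_072, 262_144):
        gib = kv_cache_bytes(EXAMPLE, tokens) / 2**30
        print(f"{tokens:>7} tokens -> {gib:5.1f} GiB of KV cache")
```

With these assumed numbers, the cache costs 256 KiB per token, so a full 262,144-token context needs 64 GiB for the cache alone, per sequence, in addition to the weights. Serving several long sequences at once multiplies that figure by the batch size.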

Industry Impact

The release of Gemma 4 31B underscores a broader trend in the AI industry: long context is becoming a baseline expectation. By releasing the model openly, Google puts pressure on other developers to match these specifications. However, the high VRAM cost of running this model at full context is a reminder that serving long contexts efficiently still lags behind the context lengths that model architectures can support. This gap will likely drive further work on quantization and memory-efficient attention mechanisms as the industry looks to make 256K+ context windows practical for broader use.
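A rough sketch of why quantization and memory-efficient attention matter: the function below estimates KV-cache size when (1) keys and values are stored in 8 bits instead of 16, and (2) some layers use sliding-window attention, caching only the most recent `window` tokens. Sliding-window attention is used here as one example of a memory-efficient scheme; the layer split, window size, and head dimensions are all hypothetical and do not describe Gemma 4 31B.

```python
# Sketch: how two memory-saving techniques shrink a long-context KV cache.
#  1) KV-cache quantization: store keys/values in 8 bits instead of 16.
#  2) Sliding-window (local) attention: some layers cache only the last `window` tokens.
# All parameter values are HYPOTHETICAL and chosen for illustration only.


def kv_cache_gib(
    num_tokens: int,
    global_layers: int,
    local_layers: int,
    window: int,
    num_kv_heads: int = 8,
    head_dim: int = 128,
    bytes_per_elem: int = 2,
) -> float:
    # Bytes for one token in one layer: keys + values across all KV heads.
    per_token_per_layer = 2 * num_kv_heads * head_dim * bytes_per_elem
    global_tokens = num_tokens              # full-attention layers cache every token
    local_tokens = min(num_tokens, window)  # sliding-window layers cap their cache
    total = per_token_per_layer * (global_layers * global_tokens + local_layers * local_tokens)
    return total / 2**30


if __name__ == "__main__":
    ctx = 262_144
    scenarios = {
        "64 global layers, 16-bit cache": dict(global_layers=64, local_layers=0, window=0),
        "64 global layers, 8-bit cache": dict(global_layers=64, local_layers=0, window=0, bytes_per_elem=1),
        "16 global + 48 local (4K window), 16-bit": dict(global_layers=16, local_layers=48, window=4_096),
    }
    for name, kwargs in scenarios.items():
        print(f"{name}: ~{kv_cache_gib(ctx, **kwargs):.1f} GiB")
```

In this toy setup, halving the cache precision halves memory, while moving most layers to a short sliding window cuts the full-context cache by roughly three quarters, because only the global layers still scale with the full 256K tokens.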

Frequently Asked Questions

Question: What is the context window size of Gemma 4 31B?

Gemma 4 31B has a reported 256K-token context window, one of the headline features of this open model release.

Question: What is the main drawback of using the full context window in Gemma 4 31B?

The primary drawback is the high VRAM requirement. Using the full 256K context window substantially increases the GPU memory needed to run the model, largely because the KV cache grows with every token held in context.

Question: Is Gemma 4 31B considered an open model?

Yes, the model is described as Google's best open model yet, though its high hardware demands may limit who can effectively run it.