Rethinking Continual Learning for AI Agents: Beyond Model Weight Updates to a Three-Layer Architecture

In a recent analysis, Harrison Chase of LangChain argues that continual learning for AI agents should not be defined solely by model weight updates. While most industry discussion centers on fine-tuning models, Chase contends that for AI agents to truly improve over time, learning must occur across three distinct layers: the model, the harness, and the context. This framing changes how developers should build and optimize agentic systems, because once the layers are distinguished, each can be given its own strategy for long-term improvement. The analysis suggests that the future of adaptive AI lies in a holistic approach that combines improvements to the surrounding system architecture and to the data the agent accumulates with improvements to the core model.

Key Takeaways

- Continual learning in AI agents is not limited to updating model weights.

- Learning occurs across three distinct layers: the model, the harness, and the context.

- Understanding these layers is essential for building AI systems that improve over time.

- The traditional focus on model-centric learning is only one part of the agentic evolution puzzle.

In-Depth Analysis

The Shift from Model-Centric Learning

Traditionally, the AI industry has equated "continual learning" almost exclusively with the process of updating model weights through fine-tuning or retraining. However, Harrison Chase posits that this view is too narrow when applied to AI agents. While the underlying model provides the foundational intelligence, it is only one component of a larger system that must adapt to new information and changing environments.

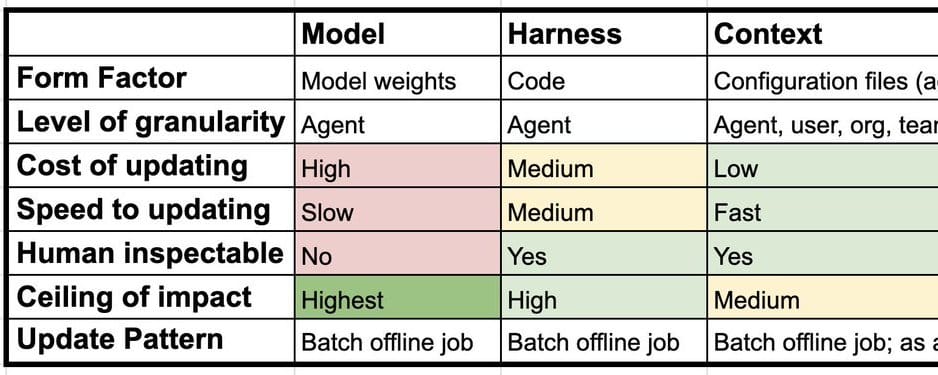

The Three Layers of Agentic Learning

To build systems that genuinely evolve, developers must recognize three specific layers where learning takes place:

- The Model Layer: This involves the core large language model (LLM) and the traditional methods of updating its internal parameters to improve performance or knowledge.

- The Harness Layer: This refers to the framework, code, and logic that surround the model, dictating how it interacts with tools, executes tasks, and processes inputs.

- The Context Layer: This involves the dynamic data, memory, and environmental information that the agent uses during its operation to inform its decision-making process.
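One way to picture the three layers is as separate components of a single agent. The Python sketch below is a hypothetical illustration, not LangChain's API or Chase's implementation: `frozen_model` is a toy stand-in for an LLM call, `Harness` holds the instructions and tool wiring around it, and `Context` holds memory gathered at run time. All names and the "prefer tool:" memory convention are assumptions made for illustration. It shows the agent's behavior changing purely because a memory was added, with the model untouched.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def frozen_model(prompt: str) -> str:
    """Model layer: a hypothetical stand-in for an LLM call.

    Its policy is fixed; changing it would require updating weights.
    Toy policy: if any prompt line reads 'prefer tool: <name>', request that
    tool; otherwise answer with the last prompt line (the task) unchanged.
    """
    lines = prompt.splitlines()
    for line in lines:
        if line.startswith("prefer tool: "):
            return "CALL " + line[len("prefer tool: "):]
    return lines[-1]


@dataclass
class Context:
    """Context layer: memory the agent consults at run time."""
    memories: List[str] = field(default_factory=list)


@dataclass
class Harness:
    """Harness layer: instructions and tool wiring around the model."""
    instructions: str
    tools: Dict[str, Callable[[str], str]]

    def build_prompt(self, context: Context, task: str) -> str:
        # The harness decides how instructions, memories and the task are combined.
        return "\n".join([self.instructions, *context.memories, task])

    def run(self, model: Callable[[str], str], context: Context, task: str) -> str:
        output = model(self.build_prompt(context, task))
        if output.startswith("CALL "):
            # The model only requests a tool; the harness executes it.
            return self.tools[output[len("CALL "):]](task)
        return output


if __name__ == "__main__":
    harness = Harness(instructions="Answer the task.", tools={"upper": str.upper})
    context = Context()
    print(harness.run(frozen_model, context, "hello"))  # hello
    # A learned memory changes behavior without touching the model.
    context.memories.append("prefer tool: upper")
    print(harness.run(frozen_model, context, "hello"))  # HELLO
```

In this sketch the model only proposes an action; the harness decides how the prompt is assembled and actually executes tools, which is why the two can be changed separately.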

Industry Impact

This framework has significant implications for the AI industry, particularly for developers building autonomous agents. By separating learning into these three layers, organizations no longer need to rely on slow, computationally expensive model retraining for every improvement. Instead, they can optimize the harness and context layers to improve agent performance faster and more cheaply. This approach encourages more modular and maintainable AI architectures, in which the "brain" (model), the "body" (harness), and the "memory" (context) can each be refined independently while contributing to the overall growth of the system.
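To make the decoupling concrete, here is a minimal Python sketch under stated assumptions: the `AgentState` snapshot, the `learn_from_feedback` function, and the split of feedback into "harness" rules versus "context" facts are hypothetical illustrations, not part of Chase's analysis or any LangChain API. It shows the harness and context layers being updated from feedback while the model layer, represented here only by a version string, stays unchanged.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class AgentState:
    """Hypothetical snapshot of an agent, keeping the three layers separate."""
    model_version: str  # model layer: changes only when weights are retrained
    harness_instructions: Tuple[str, ...] = ()  # harness layer: rules applied on every run
    context_memories: Tuple[str, ...] = ()  # context layer: facts gathered at run time


def learn_from_feedback(state: AgentState, feedback: List[Tuple[str, str]]) -> AgentState:
    """Apply feedback to the harness and context layers only.

    Each item is (layer, text) with layer 'harness' or 'context'.
    The model version is carried over unchanged: no retraining happens here.
    """
    updates = {"harness": list(state.harness_instructions),
               "context": list(state.context_memories)}
    for layer, text in feedback:
        if layer not in updates:
            raise ValueError(f"unsupported layer: {layer!r}")
        if text not in updates[layer]:
            updates[layer].append(text)  # skip duplicates so repeated feedback is harmless
    return AgentState(
        model_version=state.model_version,
        harness_instructions=tuple(updates["harness"]),
        context_memories=tuple(updates["context"]),
    )


if __name__ == "__main__":
    before = AgentState(model_version="base-model-v1")  # hypothetical version label
    after = learn_from_feedback(before, [
        ("harness", "Confirm with the user before deleting files."),
        ("context", "The user prefers metric units."),
        ("context", "The user prefers metric units."),
    ])
    print(after.model_version)  # base-model-v1
    print(len(after.harness_instructions), len(after.context_memories))  # 1 1
    print(before.context_memories)  # ()
```

Returning a new frozen `AgentState` instead of mutating the old one is a design choice in this sketch: earlier harness and context versions remain available, so a bad update could be compared against or rolled back without touching the model.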

Frequently Asked Questions

Question: Is updating model weights the only way for an AI agent to learn?

No. According to the analysis, learning also happens within the harness (the system logic) and the context (the data and memory the agent interacts with).

Question: Why is understanding the three layers important for developers?

It changes the fundamental approach to building systems. Instead of just focusing on the model, developers can create systems that improve over time by optimizing how the agent uses context and how the surrounding harness is structured.