Google Expands Live Translate Feature for Headphones to iOS and Global Markets

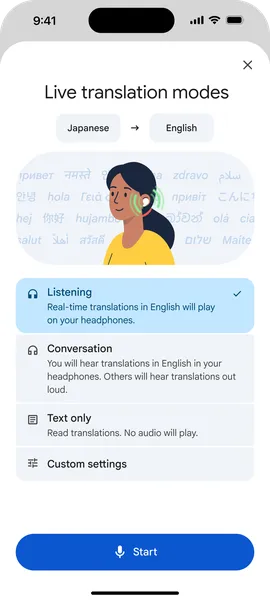

Google has officially announced that its Live Translate feature for headphones is now available on iOS. The feature was previously more limited in availability, and the expansion lets iPhone users turn their headphones into personal real-time translators. Alongside the iOS launch, Google is expanding the capability to more countries for both iOS and Android users. The update extends Google's translation technology across platforms, making it easier to communicate across languages and regions using wearable audio devices.

Key Takeaways

- iOS Launch: Google Translate’s Live Translate feature for headphones is now officially available on iOS devices.

- Global Expansion: The capability is being rolled out to a wider range of countries worldwide.

- Cross-Platform Support: Both iOS and Android users now have access to expanded Live Translate features via their headphones.

- Real-Time Translation: The update focuses on turning standard headphones into live personal translation tools.

In-Depth Analysis

Bridging the Platform Gap

For a significant period, certain advanced Google Translate features were optimized for the Android ecosystem. The official arrival of Live Translate with headphones on iOS is a move by Google toward a consistent user experience regardless of mobile operating system. iPhone users can now use hardware they already own, specifically their headphones, to hold real-time, multilingual conversations. The phone supplies the processing power and the headphones supply the audio interface, and together they form the translation loop.

Global Accessibility and Reach

Beyond the platform expansion, Google is also widening the geographical footprint of the service. Expanding the capability to more countries addresses the needs of international travelers and multilingual communities. Because the expansion covers both iOS and Android users, it suggests a focus on scaling the infrastructure behind Live Translate to handle more languages and regional nuances, so that the "personal translator" experience reaches a broader audience.

Industry Impact

The expansion of Live Translate to iOS and additional global markets points to a shift toward hardware-agnostic AI services. Offering high-utility features such as real-time translation across competing platforms (iOS and Android) can increase user retention and data diversity. The move also puts pressure on other tech giants to offer comparable cross-platform access to their AI-driven communication tools. As wearable technology continues to grow, turning simple audio devices into AI assistants that can break down language barriers raises expectations for mobile productivity and global communication.

Frequently Asked Questions

Question: Is the Live Translate feature available for all headphones on iOS?

According to the announcement, Google Translate’s Live Translate with headphones is officially arriving on iOS. Requirements for specific headphone models are typically handled within the Google Translate app.

Question: Which countries can now access this feature?

Google says it is expanding the capability to more countries for both iOS and Android users, but the initial announcement did not list the newly added countries.

Question: Does this update apply to Android users as well?

Yes. While the headline highlights the iOS arrival, the expansion of the capability to more countries applies to both iOS and Android users simultaneously.